Wapiti08/GraphSec-Flow

GitHub: Wapiti08/GraphSec-Flow

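An open-source software supply chain tool, built on the Neo4j graph database, for vulnerability propagation and root cause analysis. It supports time-constrained dependency-chain tracing and centrality-based risk measurement.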


# GraphSec-Flow

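Temporal Dependency Propagation and Root Cause Analysis in OSS Ecosystems

## Structure

- cause: causal analysis, including the custom DAS implementation and the code that generates two files of CVE-related features (one-hop neighbors and two-hop neighbors)

- cent: three centrality measures: degree (three directions), betweenness, and eigenvector

- data: extracted datasets in other formats

- exp: exploration code for individual files, code that calls the various centrality measures, and notebooks for data visualization and statistical analysis

- process: code that queries Neo4j and exports the graph to other formats (e.g. GraphML and CSV)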
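The degree and betweenness measures listed under `cent` can be illustrated with a small, dependency-free sketch. This is not the repository's code: it assumes the graph is a plain adjacency dict (node → list of downstream neighbors, with every node present as a key), reads "three directions" as in-, out- and total degree, and normalizes the way networkx does (degree divided by n - 1; directed betweenness divided by (n - 1)(n - 2)). Betweenness uses Brandes' algorithm for unweighted graphs; eigenvector centrality is left out.

```python
from collections import deque


def degree_centrality(adj):
    """In-, out- and total-degree centrality, each divided by n - 1."""
    n = len(adj)
    scale = 1.0 / (n - 1) if n > 1 else 1.0
    out_deg = {v: len(adj[v]) for v in adj}
    in_deg = dict.fromkeys(adj, 0)
    for v in adj:
        for w in adj[v]:
            in_deg[w] += 1
    return {
        "in": {v: in_deg[v] * scale for v in adj},
        "out": {v: out_deg[v] * scale for v in adj},
        "total": {v: (in_deg[v] + out_deg[v]) * scale for v in adj},
    }


def betweenness_centrality(adj):
    """Brandes' algorithm for unweighted directed graphs, normalized by (n-1)(n-2)."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        # Forward BFS from s: count shortest paths (sigma) and record predecessors
        stack, preds = [], {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)
        dist = dict.fromkeys(adj, -1)
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Backward pass: accumulate pair dependencies in reverse BFS order
        delta = dict.fromkeys(adj, 0.0)
        while stack:
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    n = len(adj)
    scale = 1.0 / ((n - 1) * (n - 2)) if n > 2 else 1.0
    return {v: c * scale for v, c in bc.items()}
```

If the pickled graphs are networkx objects (the `G.nodes[nid]` access in the node-lookup script further down suggests so, but this is unconfirmed), `nx.in_degree_centrality`, `nx.out_degree_centrality`, `nx.degree_centrality`, `nx.betweenness_centrality` and `nx.eigenvector_centrality` provide the library versions of these measures.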

## Instructions

- How to set up Goblin Weaver (launch it against the local Neo4j instance)

```
java -Dneo4jUri="bolt://localhost:7687/" -Dneo4jUser="neo4j" -Dneo4jPassword="password" -jar goblinWeaver-2.1.0.jar
```

## Data Export

- neo4j.conf configuration: add the following lines to the conf file to enable APOC export

```
dbms.security.procedures.unrestricted=apoc.*
dbms.security.procedures.allowlist=apoc.*
apoc.export.file.enabled=true
```

- Run the script:

```
# Export the dump to GraphML and CSV formats
python3 data_export.py
```
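The contents of `data_export.py` are not shown here, so the following is only a hypothetical sketch of how an APOC export can be triggered from Python once the settings above are in place. It assumes the official `neo4j` Python driver is installed and reuses the connection details from the Goblin Weaver command; `apoc_export_queries` and `export_all` are illustrative names. `apoc.export.graphml.all` and `apoc.export.csv.all` are APOC procedures, and they write the files on the Neo4j server side, not in the Python client's working directory.

```python
def apoc_export_queries(basename: str) -> list[tuple[str, dict]]:
    """Cypher calls that export the whole database via APOC (hypothetical helper)."""
    return [
        ("CALL apoc.export.graphml.all($file, {useTypes: true})",
         {"file": f"{basename}.graphml"}),
        ("CALL apoc.export.csv.all($file, {})",
         {"file": f"{basename}.csv"}),
    ]


def export_all(uri: str, user: str, password: str, basename: str = "dep_graph") -> None:
    # Imported lazily so the helper above can be used without the driver installed.
    from neo4j import GraphDatabase  # official Neo4j Python driver (`pip install neo4j`)

    with GraphDatabase.driver(uri, auth=(user, password)) as driver:
        with driver.session() as session:
            for query, params in apoc_export_queries(basename):
                # consume() blocks until the export procedure has finished
                session.run(query, params).consume()
```

Usage, assuming the same credentials as above: `export_all("bolt://localhost:7687", "neo4j", "password")`.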

## Setup

(Tested with small-scale data on macOS and Ubuntu 20.04.5 LTS)

```
# Set up pyenv and pyenv-virtualenv
curl https://pyenv.run | bash
export PYENV_ROOT="$HOME/.pyenv"
[[ -d $PYENV_ROOT/bin ]] && export PATH="$PYENV_ROOT/bin:$PATH"
eval "$(pyenv init -)"
eval "$(pyenv virtualenv-init -)"

# (Ubuntu) Install the system libraries pyenv needs to build Python
sudo apt-get update
sudo apt-get install -y build-essential libffi-dev libssl-dev zlib1g-dev \
    libbz2-dev libreadline-dev libsqlite3-dev liblzma-dev tk-dev uuid-dev

# Specify the Python version
pyenv install 3.10
pyenv global 3.10

# Create and activate the local environment
pyenv virtualenv 3.10 GraphSec-Flow
pyenv activate GraphSec-Flow

# Upgrade build tools to avoid compatibility issues
python -m pip install -U pip setuptools wheel build

# Install dependencies
pip3 install -r requirements.txt
```

## Usage

- Generate the CVE-augmented dependency graph

```
cd cve
python3 graph_cve.py --dep_graph {your local path}/data/dep_graph.pkl --cve_json {your local path}/data/aggregated_data.json --nodes_pkl {your local path}/data/graph_nodes_edges.pkl --augment_graph {your local path}/data/dep_graph_cve.pkl
```

- Generate ground-truth data

```
# Depth 3, no time constraint:
python3 gt_builder_parallel.py --dep-graph /workspace/GraphSec-Flow/data/dep_graph_cve.pkl --cve-meta /workspace/GraphSec-Flow/data/cve_records_for_meta.pkl --out-root /workspace/GraphSec-Flow/data --out-paths /workspace/GraphSec-Flow/data --no-time-constraint --max-depth 3 --num-workers 64
```

- Root cause analysis

```
python3 root_ana.py --cve_id "CVE-2017-5650"
```

- Root cause path analysis

```
python3 path_track.py --aug_graph /workspace/GraphSec-Flow/data/dep_graph_cve.pkl --paths_jsonl /workspace/GraphSec-Flow/result/result.json --subgraph_gexf /workspace/GraphSec-Flow/result/result.gexf --t_start 1021437154000 --t_end 1724985046000
```
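The `--t_start` and `--t_end` values look like Unix epoch timestamps in milliseconds. That is an assumption based on their 13-digit magnitude; under that reading they correspond to 2002-05-15 04:32:34 UTC and 2024-08-30 02:30:46 UTC. A small helper for producing such values from calendar dates, with `to_epoch_ms` and `from_epoch_ms` as hypothetical names:

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_epoch_ms(dt: datetime) -> int:
    """Milliseconds since the Unix epoch; naive datetimes are treated as UTC (assumption)."""
    if dt.tzinfo is None:
        dt = dt.replace(tzinfo=timezone.utc)
    # Integer division of timedeltas avoids floating-point rounding
    return (dt - EPOCH) // timedelta(milliseconds=1)


def from_epoch_ms(ms: int) -> datetime:
    """Inverse of to_epoch_ms, returning an aware UTC datetime."""
    return EPOCH + timedelta(milliseconds=ms)
```

For example, `to_epoch_ms(datetime(2024, 8, 30, 2, 30, 46))` yields the `--t_end` value used above.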

- Generate a node lookup for manual validation:

```
python3 - << 'EOF'
import csv, json, pickle

print("Loading graph...")
with open('data/dep_graph_cve.pkl', 'rb') as f:
    G = pickle.load(f)

# Collect every node ID listed in the '|'-separated top_predicted_nodes column
node_ids = set()
with open('data/validation/manual_labels_predicted.csv') as f:
    for row in csv.DictReader(f):
        for nid in row['top_predicted_nodes'].split('|'):
            if nid.strip():
                node_ids.add(nid.strip())

print(f"Resolving {len(node_ids)} node IDs...")

# Map each node ID to its release metadata, trying alternative attribute names
result = {}
for nid in sorted(node_ids):
    if nid in G.nodes:
        d = G.nodes[nid]
        result[nid] = {
            'release':  d.get('release', d.get('artifact', d.get('name', '?'))),
            'group':    d.get('group_id', d.get('groupId', '')),
            'artifact': d.get('artifact_id', d.get('artifactId', '')),
            'version':  d.get('version', ''),
            'has_cve':  d.get('has_cve', False),
        }
    else:
        result[nid] = {'release': 'NOT FOUND'}

with open('data/validation/node_lookup.json', 'w') as f:
    json.dump(result, f, indent=2)

# Print a group:artifact:version label when available, else the release name
for nid, info in result.items():
    r = info.get('release', '')
    g = info.get('group', '')
    a = info.get('artifact', '')
    v = info.get('version', '')
    label = f"{g}:{a}:{v}" if g and a else r
    print(f"  {nid:15s} → {label}")

print("\n✓ Saved to data/validation/node_lookup.json")
EOF
```


- Benchmark

```
nohup python bench/benchmark.py --dep-graph data/dep_graph_cve_2hop_random.pkl --ref-layer data/ref_paths_layer_full_6.jsonl --node-texts data/nodeid_to_texts.pkl --cve-meta data/cve_records_for_meta.pkl --per-cve data/per_cve_scores.pkl --node-scores data/node_cve_scores.pkl > logs/benchmark_2hop_6_random_opt.txt 2>&1 &
```

- Small-Scale Validation Benchmark

```
# Small-scale (2-hop) graph, baseline
nohup python bench/benchmark_opt.py --dep-graph data/dep_graph_cve_2hop.pkl --ref-layer data/ref_paths_layer_3.jsonl --node-texts data/nodeid_to_texts.pkl --cve-meta data/cve_records_for_meta.pkl --per-cve data/per_cve_scores.pkl --node-scores data/node_cve_scores.pkl > logs/benchmark_2hop_g3_baseline.txt 2>&1 &

# Full graph, baseline
nohup python bench/benchmark_opt.py --dep-graph data/dep_graph_cve.pkl --ref-layer data/ref_paths_layer_3.jsonl --node-texts data/nodeid_to_texts.pkl --cve-meta data/cve_records_for_meta.pkl --per-cve data/per_cve_scores.pkl --node-scores data/node_cve_scores.pkl > logs/benchmark_g3_baseline.txt 2>&1 &

# Small-scale (2-hop) graph, random variant
nohup python bench/benchmark_opt.py --dep-graph data/dep_graph_cve_2hop_random.pkl --ref-layer data/ref_paths_layer_3.jsonl --node-texts data/nodeid_to_texts.pkl --cve-meta data/cve_records_for_meta.pkl --per-cve data/per_cve_scores.pkl --node-scores data/node_cve_scores.pkl > logs/benchmark_2hop_g3_random.txt 2>&1 &

# Full graph, random timestamps
nohup python bench/benchmark_opt.py --dep-graph data/validation/dep_graph_cve_random_timestamps.pkl --ref-layer data/ref_paths_layer_3.jsonl --node-texts data/nodeid_to_texts.pkl --cve-meta data/cve_records_for_meta.pkl --per-cve data/per_cve_scores.pkl --node-scores data/node_cve_scores.pkl > logs/benchmark_g3_random.txt 2>&1 &
```

- Batch Prediction

```
nohup python3 validation/batch_predict.py --max-cves 100 > batch_predict_100.txt 2>&1 &
```

- Actionability Test

```
# Run batch_predict first; this step needs its results
python validation/actionability.py -k_values 1 3 5 10 15 > logs/actionability_small_ks.txt
```

- Depth Validation (on the subgraph)

```
python validation/depth_ablation.py \
    --dep-graph data/dep_graph_cve_sub.pkl \
    --cve-meta data/cve_records_for_meta.pkl \
    --predictions data/validation/predictions.json \
    --depths 2 3 4 6 8 \
    --out data/validation/depth_ablation_sub.json \
    2>&1 | tee logs/depth_ablation_sub.log
```


## Ground-Truth Construction (silver, inferred)

We build a **silver** ground truth for evaluation using (i) earliest-affected release selection from OSV/NVD metadata and (ii) a time-respecting, depth-bounded traversal that generates reference propagation edges. This GT is **inferred** (not manually verified).

### Algorithm 1: Root-Cause Inference (Earliest Vulnerable Release)

**Input:** vulnerability metadata (affected ranges `R`, optional fixing commits `F`, publication time), dependency graph `G`

**Output:** inferred root-cause release node `r`

1. Resolve package id `p` from the advisory (name / repo URL).

2. Normalize semantic versions in affected ranges `R`.

3. Collect candidate releases `S = { s in G | package(s)=p and version(s) in R }`.

4. For each `s in S`, get release time `t(s)`.

5. Return `r = argmin_{s in S} t(s)`.
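A minimal Python sketch of Algorithm 1, under stated assumptions: step 1 (package resolution) has already produced the package id `p`, and affected ranges arrive as OSV-style `(introduced, fixed)` pairs whose upper bound is exclusive and may be missing. `Release`, `normalize`, `in_ranges` and `infer_root` are hypothetical names, and `normalize` is a deliberately crude stand-in for a real semantic-version parser (it drops pre-release and build tags).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Release:
    node_id: str
    package: str
    version: str
    release_time: int  # t(s); epoch milliseconds (assumed)


def normalize(version: str) -> tuple[int, ...]:
    """Step 2 (simplified): 'v1.2-rc1' -> (1, 2, 0); non-numeric suffixes are ignored."""
    core = version.lstrip("vV").split("-")[0].split("+")[0]
    parts = []
    for piece in core.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits) if digits else 0)
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def in_ranges(version: str, ranges: list[tuple[str, Optional[str]]]) -> bool:
    """True if version lies in any [introduced, fixed) range; fixed=None means unbounded."""
    v = normalize(version)
    for introduced, fixed in ranges:
        if v < normalize(introduced or "0"):
            continue
        if fixed is None or v < normalize(fixed):
            return True
    return False


def infer_root(releases: list[Release], package: str,
               ranges: list[tuple[str, Optional[str]]]) -> Optional[Release]:
    # Step 3: candidate releases of package p whose version is in R
    candidates = [r for r in releases
                  if r.package == package and in_ranges(r.version, ranges)]
    if not candidates:
        return None
    # Steps 4-5: the earliest release time wins
    return min(candidates, key=lambda r: r.release_time)
```

Returning `None` when no candidate matches makes unresolvable advisories explicit instead of silently picking an unrelated release.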

### Algorithm 2: Reference Propagation Path Generation (Depth-Bounded)

**Input:** root `r`, graph `G`, max depth `d_max`

**Output:** reference edge set `P`

1. Initialize queue `Q = [(r,0)]`, set `P = ∅`.

2. While `Q` is not empty:
   - Pop `(u,d)`. If `d == d_max`, continue.
   - For each downstream dependent release `v` of `u` in `G`:
     - If `release_time(v) >= release_time(u)`:
       - Add edge `(u → v)` to `P`
       - Push `(v, d+1)` into `Q`

3. Return `P`

See `docs/ground_truth.md` for the full LaTeX version and validation checks.
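Algorithm 2 maps directly onto a breadth-first search. The sketch below follows the pseudocode step by step. The adjacency dict `dependents` (release → downstream dependent releases), the `release_time` mapping and the `time_constraint` switch are illustrative assumptions, not the repository's API; the switch is only meant to suggest what a flag like `--no-time-constraint` in `gt_builder_parallel.py` might disable.

```python
from collections import deque


def reference_paths(root, dependents, release_time, d_max, time_constraint=True):
    """Depth-bounded BFS from `root` returning the reference edge set P."""
    queue = deque([(root, 0)])  # Q = [(r, 0)]
    paths = set()               # P = ∅
    while queue:
        u, d = queue.popleft()
        if d == d_max:
            continue
        for v in dependents.get(u, ()):
            # Keep only time-respecting edges: v released no earlier than u
            if not time_constraint or release_time[v] >= release_time[u]:
                paths.add((u, v))
                queue.append((v, d + 1))
    return paths
```

Like the pseudocode, it keeps no visited set, so a release reachable along several paths may be expanded more than once; `d_max` bounds that work, and storing `P` as a set removes duplicate edges.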

## Statistical Analysis (Supplementary Material)

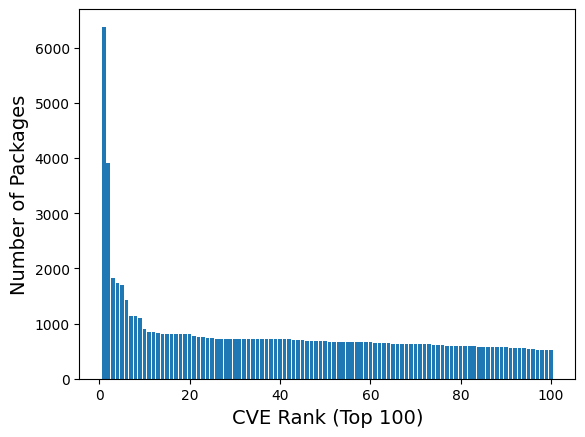

- Distribution of the Number of Packages per CVE (Top 100):

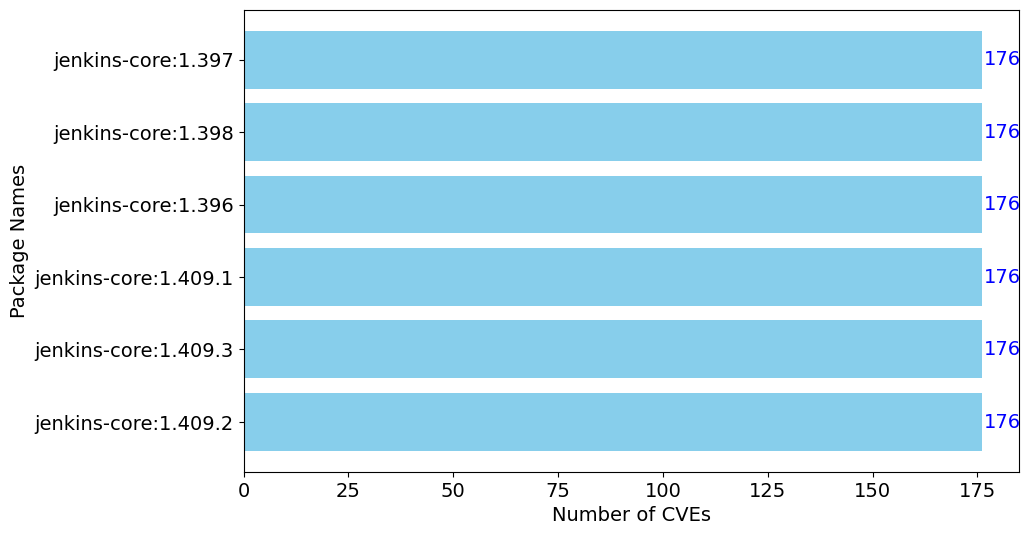

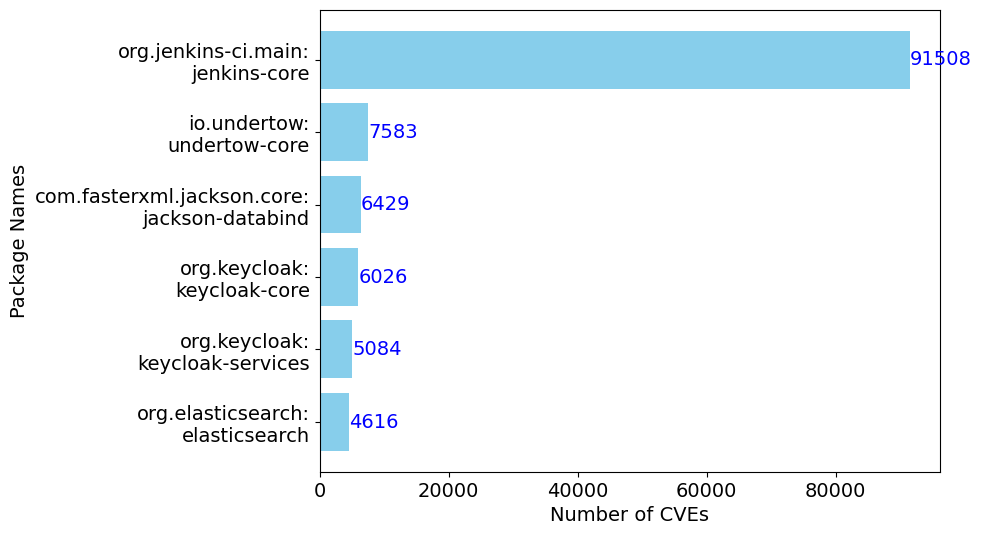

- Releases by number of CVEs (Top 6):

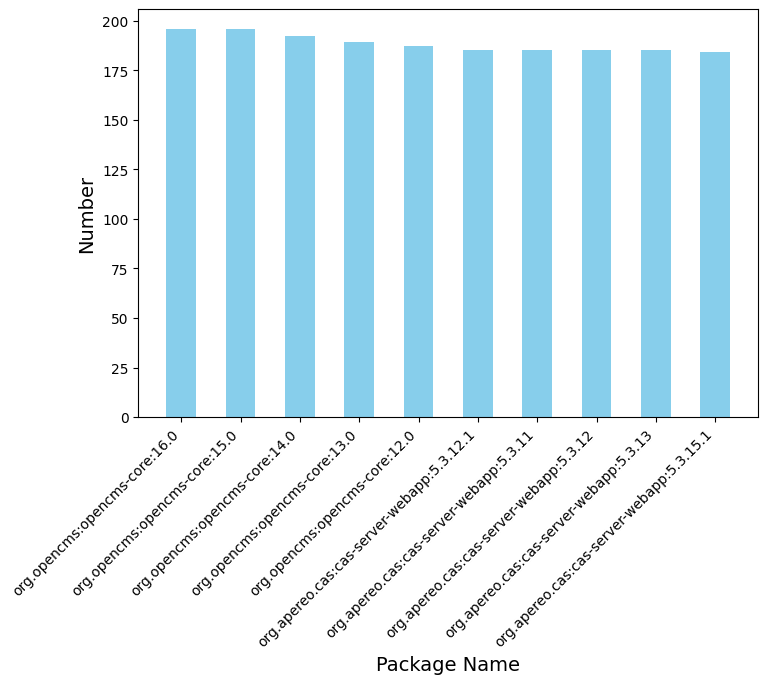

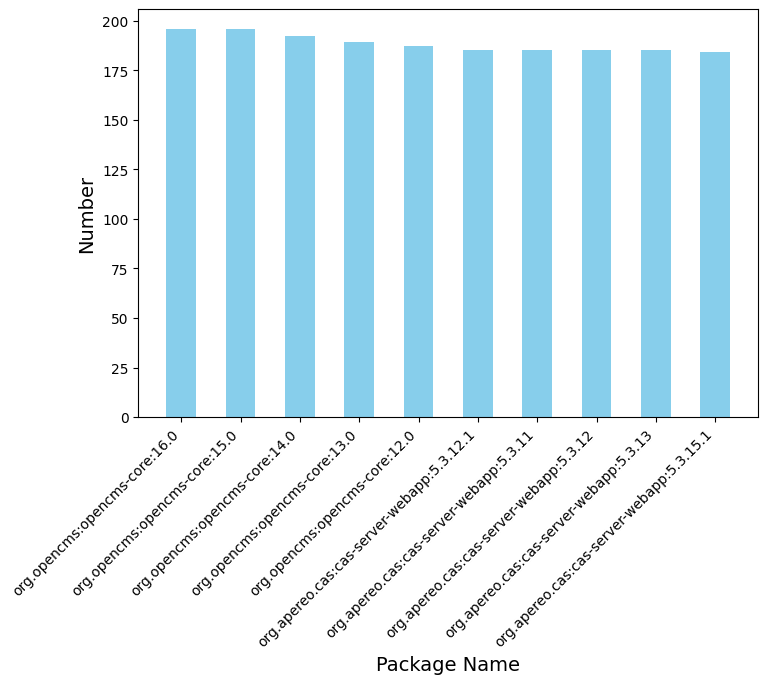

- Top 10 Packages with Vulnerable Releases:

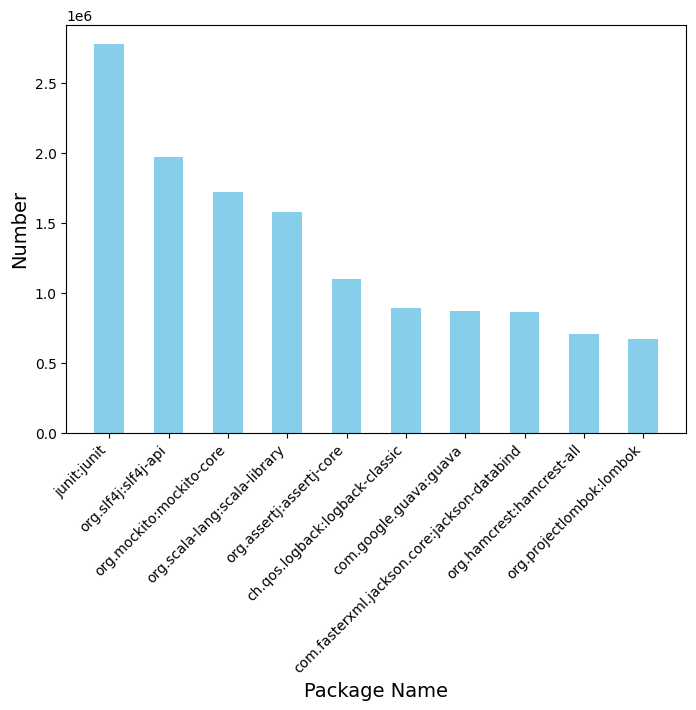

- Top 10 Packages with Highest Degree Centrality:

- Top 10 Vulnerable Releases with Highest Out-degree:

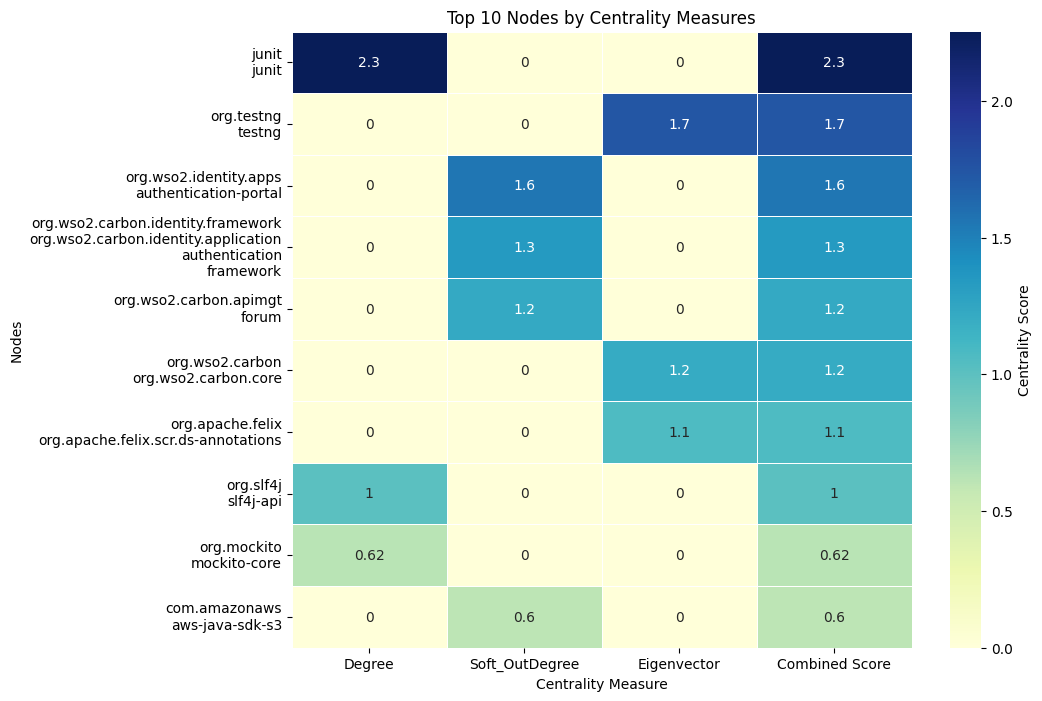

- Top 10 Nodes Heatmap:

- Packages by number of CVEs:
