HDT3213/rdb

GitHub: HDT3213/rdb

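A Redis RDB parser written in Go. It supports memory analysis, format conversion (JSON/AOF), big-key detection and flame-graph visualization, and is suited to operational troubleshooting and secondary development.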

Stars: 599 | Forks: 137


[中文版](https://github.com/HDT3213/rdb/blob/master/README_CN.md)

This is a Redis RDB parser implemented in Golang, intended for secondary development and memory analysis.

It provides the following features:

- Generate a memory report for an RDB file
- Convert an RDB file to JSON
- Convert an RDB file to the Redis Serialization Protocol (or an AOF file)
- Find the N largest keys in an RDB file
- Draw a flame graph to analyze which kinds of keys use the most memory
- Custom data usage
- Generate RDB files

Supported RDB versions: 1 <= version <= 12 (Redis 7.2)

If you read Chinese, you can find a detailed introduction to the RDB file format here: [Golang 实现 Redis(11): RDB 文件格式](https://www.cnblogs.com/Finley/p/16251360.html)

Thanks to sripathikrishnan for [redis-rdb-tools](https://github.com/sripathikrishnan/redis-rdb-tools).

# Installation

If you already have `go` installed, simply run:

```
go install github.com/hdt3213/rdb@latest
```

### Package Managers

If you are a [Homebrew](https://brew.sh/) user, you can install [rdb](https://formulae.brew.sh/formula/rdb) with:

```
$ brew install rdb
```

Alternatively, you can download an executable binary from [releases](https://github.com/HDT3213/rdb/releases) and add its path to the PATH environment variable.

Run `rdb` in a terminal to see its manual:

```
$ rdb
This is a tool to parse Redis' RDB files
Options:
  -c command, including: json/memory/aof/bigkey/prefix/flamegraph
  -o output file path
  -n number of result, using in command: bigkey/prefix
  -port listen port for flame graph web service
  -sep separator for flamegraph, rdb will separate key by it, default value is ":".
       supporting multi separators: -sep sep1 -sep sep2
  -regex using regex expression filter keys
  -expire filter keys by its expiration time
       1. '1751731200~1751817600' get keys with expiration time in range [1751731200, 1751817600]
       2. '1751731200~now' 'now~1751731200' magic variable 'now' represents the current timestamp
       3. '1751731200~inf' 'now~inf' magic variable 'inf' represents the Infinity
       4. 'noexpire' get keys without expiration time
       5. 'anyexpire' get all keys with expiration time
  -size filter keys by size, supports B/KB/MB/GB/TB/PB/EB
       1. '1KB~1MB' get keys with size in range [1KB, 1MB]
       2. '10MB~inf' magic variable 'inf' represents the Infinity
       3. '1024~10KB' get keys with size in range [0Bytes, 10KB]
  -concurrent The number of concurrent json converters. 4 by default.
  -show-global-meta Show global meta likes redis-verion/ctime/functions
  -no-expired filter expired keys(deprecated, please use 'expire' option)

Examples:
parameters between '[' and ']' is optional
1. convert rdb to json
  rdb -c json -o dump.json dump.rdb
2. generate memory report
  rdb -c memory -o memory.csv dump.rdb
3. convert to aof file
  rdb -c aof -o dump.aof dump.rdb
4. get largest keys
  rdb -c bigkey [-o dump.aof] [-n 10] dump.rdb
5. get number and memory size by prefix
  rdb -c prefix [-n 10] [-max-depth 3] [-o prefix-report.csv] dump.rdb
6. draw flamegraph
  rdb -c flamegraph [-port 16379] [-sep :] dump.rdb
```

# Convert to JSON

Usage:

```
rdb -c json -o

```

Example:

```
rdb -c json -o intset_16.json cases/intset_16.rdb
```

You can find some sample RDB files in [cases](https://github.com/HDT3213/rdb/tree/master/cases).

Sample JSON output:

```
[
{"db":0,"key":"hash","size":64,"type":"hash","hash":{"ca32mbn2k3tp41iu":"ca32mbn2k3tp41iu","mddbhxnzsbklyp8c":"mddbhxnzsbklyp8c"}},
{"db":0,"key":"string","size":10,"type":"string","value":"aaaaaaa"},
{"db":0,"key":"expiration","expiration":"2022-02-18T06:15:29.18+08:00","size":8,"type":"string","value":"zxcvb"},
{"db":0,"key":"list","expiration":"2022-02-18T06:15:29.18+08:00","size":66,"type":"list","values":["7fbn7xhcnu","lmproj6c2e","e5lom29act","yy3ux925do"]},
{"db":0,"key":"zset","expiration":"2022-02-18T06:15:29.18+08:00","size":57,"type":"zset","entries":[{"member":"zn4ejjo4ths63irg","score":1},{"member":"1ik4jifkg6olxf5n","score":2}]},
{"db":0,"key":"set","expiration":"2022-02-18T06:15:29.18+08:00","size":39,"type":"set","members":["2hzm5rnmkmwb3zqd","tdje6bk22c6ddlrw"]}
]
```
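If you post-process the JSON output in Go, a minimal sketch like the following can decode it with the standard `encoding/json` package. The `keyRecord` struct and the `sizeByType` helper are hypothetical (not types exported by rdb): the struct only mirrors the common fields visible in the sample above (`db`, `key`, `size`, `type`, optional `expiration`), and type-specific fields are simply ignored during decoding.

```go
package main

import (
	"encoding/json"
	"fmt"
	"time"
)

// keyRecord is a hypothetical struct mirroring only the common fields of the
// sample JSON output; type-specific fields (value, values, hash, ...) are ignored.
type keyRecord struct {
	DB         int        `json:"db"`
	Key        string     `json:"key"`
	Size       int        `json:"size"` // estimated memory size in bytes
	Type       string     `json:"type"`
	Expiration *time.Time `json:"expiration"` // nil when the key has no TTL
}

// sizeByType decodes a JSON array in the layout shown above and sums the
// estimated size per Redis type.
func sizeByType(data []byte) (map[string]int, error) {
	var records []keyRecord
	if err := json.Unmarshal(data, &records); err != nil {
		return nil, err
	}
	totals := make(map[string]int)
	for _, r := range records {
		totals[r.Type] += r.Size
	}
	return totals, nil
}

func main() {
	sample := []byte(`[
{"db":0,"key":"string","size":10,"type":"string","value":"aaaaaaa"},
{"db":0,"key":"expiration","expiration":"2022-02-18T06:15:29.18+08:00","size":8,"type":"string","value":"zxcvb"},
{"db":0,"key":"set","expiration":"2022-02-18T06:15:29.18+08:00","size":39,"type":"set","members":["2hzm5rnmkmwb3zqd","tdje6bk22c6ddlrw"]}
]`)
	totals, err := sizeByType(sample)
	if err != nil {
		panic(err)
	}
	fmt.Println(totals) // map[set:39 string:18]
}
```

Because the whole array is unmarshalled at once, this suits small to medium dumps; for very large files a streaming `json.Decoder` would be the more economical choice.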

Use `-concurrent` to change the number of concurrent converters. The default is 4.

```
rdb -c json -o intset_16.json -concurrent 8 cases/intset_16.rdb
```

Use `-show-global-meta` to include the metadata stored in the RDB file (redis-ver, ctime, used-mem, etc.) and its functions in the output.

```
rdb -c json -o function.json -show-global-meta cases/function.rdb
```

Example output:

```
[
{"db":0,"key":"redis-ver","size":0,"type":"aux","encoding":"","value":"7.2.5"},
{"db":0,"key":"redis-bits","size":0,"type":"aux","encoding":"","value":"64"},
{"db":0,"key":"ctime","size":0,"type":"aux","encoding":"","value":"1767107423"},
{"db":0,"key":"used-mem","size":0,"type":"aux","encoding":"","value":"1269264"},
{"db":0,"key":"aof-base","size":0,"type":"aux","encoding":"","value":"0"},
{"db":0,"key":"functions","size":0,"type":"functions","encoding":"functions","functionsLua":"#!lua name=mylib\nredis.register_function('myfunc', function(keys, args) return 'hello' end)"}
]
```

# Generate Memory Report

rdb estimates Redis memory usage from the RDB-encoded size of each key.

```
rdb -c memory -o
```

Example:

```
rdb -c memory -o mem.csv cases/memory.rdb
```

Sample CSV output:

```
database,key,type,size,size_readable,element_count
0,hash,hash,64,64B,2
0,s,string,10,10B,0
0,e,string,8,8B,0
0,list,list,66,66B,4
0,zset,zset,57,57B,2
0,large,string,2056,2K,0
0,set,set,39,39B,2
```
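Because the report is plain CSV with the header `database,key,type,size,size_readable,element_count`, it can be filtered with Go's standard `encoding/csv`. The sketch below is an illustration, not part of rdb: `keysAtLeast` is a hypothetical helper that locates columns by header name and returns keys whose `size` (bytes) is at least a threshold.

```go
package main

import (
	"encoding/csv"
	"fmt"
	"strconv"
	"strings"
)

// keysAtLeast (hypothetical helper) reads a memory report in the CSV layout
// shown above and returns keys whose "size" column is >= minSize bytes.
// Columns are looked up by header name instead of fixed positions.
func keysAtLeast(report string, minSize int64) ([]string, error) {
	rows, err := csv.NewReader(strings.NewReader(report)).ReadAll()
	if err != nil {
		return nil, err
	}
	if len(rows) == 0 {
		return nil, nil
	}
	col := make(map[string]int)
	for i, name := range rows[0] {
		col[name] = i
	}
	keyIdx, okKey := col["key"]
	sizeIdx, okSize := col["size"]
	if !okKey || !okSize {
		return nil, fmt.Errorf("report lacks a key or size column")
	}
	var keys []string
	for _, row := range rows[1:] {
		size, err := strconv.ParseInt(row[sizeIdx], 10, 64)
		if err != nil {
			return nil, err
		}
		if size >= minSize {
			keys = append(keys, row[keyIdx])
		}
	}
	return keys, nil
}

func main() {
	report := `database,key,type,size,size_readable,element_count
0,hash,hash,64,64B,2
0,s,string,10,10B,0
0,list,list,66,66B,4
0,large,string,2056,2K,0
`
	keys, err := keysAtLeast(report, 64)
	if err != nil {
		panic(err)
	}
	fmt.Println(keys) // [hash list large]
}
```

Using the raw `size` column rather than `size_readable` avoids having to parse human-readable suffixes such as `2K`.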

# Prefix Analysis

If your modules can be told apart by key prefix, for example user data under `User:`, posts under `Post:`, user statistics under `Stat:User:???` and post statistics under `Stat:Post:???`, prefix analysis reports the status of each module:

```
database,prefix,size,size_readable,key_count
0,Post:,1170456184,1.1G,701821
0,Stat:,405483812,386.7M,3759832
0,Stat:Post:,291081520,277.6M,2775043
0,User:,241572272,230.4M,265810
0,Topic:,171146778,163.2M,694498
0,Topic:Post:,163635096,156.1M,693758
0,Stat:Post:View,133201208,127M,1387516
0,Stat:User:,114395916,109.1M,984724
0,Stat:Post:Comment:,80178504,76.5M,693758
0,Stat:Post:Like:,77701688,74.1M,693768
```

Usage:

```
rdb -c prefix [-n ] [-max-depth ] -o
```

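- Prefix analysis results are sorted by memory size in descending order. The `-n` option sets how many entries to output; by default, all entries are output.
- `-max-depth` limits the maximum depth of the prefix tree. In the example above, `Stat:` has depth 1, while `Stat:User:` and `Stat:Post:` have depth 2.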
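The depth rule can be illustrated with a simplified sketch that splits each key on `:` and credits its size to every separator-terminated prefix up to `maxDepth`. This is an assumption-laden approximation, not rdb's implementation: the sample output above contains a prefix without a trailing separator (`Stat:Post:View`), which suggests the tool does not simply split on a fixed separator. `prefixStats` and `stat` are hypothetical names.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// stat is a hypothetical per-prefix aggregate.
type stat struct {
	Prefix   string
	Size     int64
	KeyCount int
}

// prefixStats (hypothetical, simplified) credits each key's size to its
// separator-terminated prefixes from depth 1 up to maxDepth, then sorts the
// prefixes by size in descending order. The final segment is not a prefix.
func prefixStats(sizes map[string]int64, sep string, maxDepth int) []stat {
	agg := make(map[string]*stat)
	for key, size := range sizes {
		parts := strings.Split(key, sep)
		for depth := 1; depth < len(parts) && depth <= maxDepth; depth++ {
			p := strings.Join(parts[:depth], sep) + sep
			s, ok := agg[p]
			if !ok {
				s = &stat{Prefix: p}
				agg[p] = s
			}
			s.Size += size
			s.KeyCount++
		}
	}
	out := make([]stat, 0, len(agg))
	for _, s := range agg {
		out = append(out, *s)
	}
	sort.Slice(out, func(i, j int) bool {
		if out[i].Size != out[j].Size {
			return out[i].Size > out[j].Size
		}
		return out[i].Prefix < out[j].Prefix // deterministic tie-break
	})
	return out
}

func main() {
	sizes := map[string]int64{
		"Stat:User:1": 100,
		"Stat:Post:1": 300,
		"Stat:Post:2": 200,
		"User:1":      50,
	}
	for _, s := range prefixStats(sizes, ":", 2) {
		fmt.Printf("%s,%d,%d\n", s.Prefix, s.Size, s.KeyCount)
	}
	// Prints:
	// Stat:,600,3
	// Stat:Post:,500,2
	// Stat:User:,100,1
	// User:,50,1
}
```

With `maxDepth` set to 1, only `Stat:` and `User:` would be reported, which matches the depth description above.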

Example:

```
rdb -c prefix -n 10 -max-depth 2 -o prefix.csv cases/memory.rdb
```

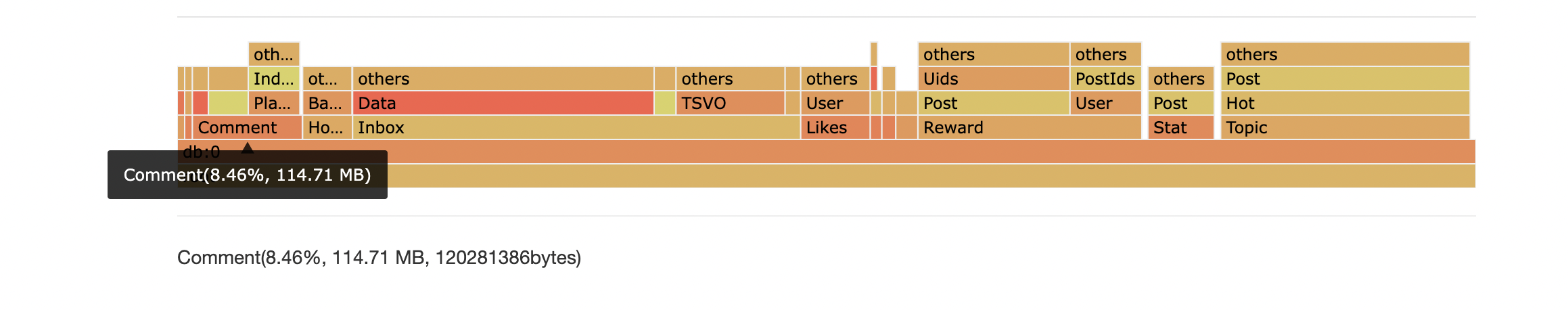

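# Flame Graph

In many cases, most of the memory is consumed not by a few very large keys but by a large number of small ones.

rdb can split keys by a given separator and then aggregate keys that share the same prefix.

Finally, rdb renders the result as a flame graph, which shows you which kinds of keys consume the most memory.

In this example, keys matching the pattern `Comment:*` use 8.463% of the memory.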

Usage:

```
rdb -c flamegraph [-port ] [-sep ] [-sep ]
```

Example:

```
rdb -c flamegraph -port 16379 -sep : dump.rdb
```
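To show what splitting and aggregating keys means in practice, the sketch below splits keys on one or more separators (as repeated `-sep` flags allow) and emits "folded stack" lines (`frame;frame;frame size`), the input format of Brendan Gregg's `flamegraph.pl`. That output format is an assumption chosen for illustration; rdb itself serves its flame graph as a web page on `-port`. `foldKeys` is a hypothetical helper.

```go
package main

import (
	"fmt"
	"sort"
	"strings"
)

// foldKeys (hypothetical) splits every key on any of seps, sums sizes for
// identical paths and returns sorted folded-stack lines such as "Comment;1 40".
func foldKeys(sizes map[string]int64, seps []string) []string {
	pairs := make([]string, 0, 2*len(seps))
	for _, s := range seps {
		pairs = append(pairs, s, "\x00") // normalize every separator to NUL
	}
	norm := strings.NewReplacer(pairs...)
	totals := make(map[string]int64)
	for key, size := range sizes {
		frames := strings.Split(norm.Replace(key), "\x00")
		for i, f := range frames {
			// ';' delimits frames in the folded format, so escape it in segments.
			frames[i] = strings.ReplaceAll(f, ";", "_")
		}
		totals[strings.Join(frames, ";")] += size
	}
	lines := make([]string, 0, len(totals))
	for path, size := range totals {
		lines = append(lines, fmt.Sprintf("%s %d", path, size))
	}
	sort.Strings(lines)
	return lines
}

func main() {
	sizes := map[string]int64{
		"Comment:1":      40,
		"Comment:2":      60,
		"User:7/profile": 30,
	}
	for _, line := range foldKeys(sizes, []string{":", "/"}) {
		fmt.Println(line)
	}
	// Prints:
	// Comment;1 40
	// Comment;2 60
	// User;7;profile 30
}
```

A flame graph renderer merges frames with a common prefix, so all `Comment;…` lines end up under a single `Comment` bar whose width is their combined size.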

# Find the Largest Keys

rdb can find the N largest keys in a file:

```
rdb -c bigkey -n
```

Example:

```
rdb -c bigkey -n 5 cases/memory.rdb
```

Sample CSV output:

```
database,key,type,size,size_readable,element_count
0,large,string,2056,2K,0
0,list,list,66,66B,4
0,hash,hash,64,64B,2
0,zset,zset,57,57B,2
0,set,set,39,39B,2
```
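Finding the N largest keys does not require sorting the whole keyspace. One common technique, sketched here as an assumption rather than a description of rdb's internals, is a bounded min-heap built on Go's `container/heap`: the smallest of the current top N sits at the root and is replaced whenever a larger key arrives, so memory stays O(N). `keySize`, `minHeap` and `topN` are hypothetical names. Fed the sizes from the memory report sample with N = 5, it returns the same five keys as the output above.

```go
package main

import (
	"container/heap"
	"fmt"
	"sort"
)

// keySize is a hypothetical record: a key and its estimated size in bytes.
type keySize struct {
	Key  string
	Size int64
}

// minHeap keeps the smallest retained record at index 0.
type minHeap []keySize

func (h minHeap) Len() int           { return len(h) }
func (h minHeap) Less(i, j int) bool { return h[i].Size < h[j].Size }
func (h minHeap) Swap(i, j int)      { h[i], h[j] = h[j], h[i] }
func (h *minHeap) Push(x any)        { *h = append(*h, x.(keySize)) }
func (h *minHeap) Pop() any {
	old := *h
	x := old[len(old)-1]
	*h = old[:len(old)-1]
	return x
}

// topN streams over records, retaining at most n of them, and returns the
// retained records sorted from largest to smallest.
func topN(records []keySize, n int) []keySize {
	if n <= 0 {
		return nil
	}
	h := &minHeap{}
	for _, r := range records {
		if h.Len() < n {
			heap.Push(h, r)
		} else if r.Size > (*h)[0].Size {
			(*h)[0] = r // evict the current smallest
			heap.Fix(h, 0)
		}
	}
	out := []keySize(*h)
	sort.Slice(out, func(i, j int) bool { return out[i].Size > out[j].Size })
	return out
}

func main() {
	records := []keySize{
		{"hash", 64}, {"s", 10}, {"e", 8}, {"list", 66},
		{"zset", 57}, {"large", 2056}, {"set", 39},
	}
	for _, r := range topN(records, 5) {
		fmt.Printf("%s,%d\n", r.Key, r.Size)
	}
}
```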

# Convert to AOF

Usage:

```
rdb -c aof -o
```

Example:

```
rdb -c aof -o mem.aof cases/memory.rdb
```

Sample AOF output:

```
*3
$3
SET
$1
s
$7
aaaaaaa
```
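Each command in the AOF output is a RESP array of bulk strings: `*<argc>`, then `$<byte length>` followed by the argument for each element, with every line terminated by CRLF (`\r\n`). A minimal Go sketch of this encoding follows; `encodeCommand` is a hypothetical name, and the code reproduces the `SET s aaaaaaa` sample above byte for byte.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// encodeCommand (hypothetical) serializes a command as a RESP array of bulk
// strings. len(a) counts bytes, so multi-byte UTF-8 arguments are sized correctly.
func encodeCommand(args ...string) string {
	var b strings.Builder
	b.WriteString("*" + strconv.Itoa(len(args)) + "\r\n")
	for _, a := range args {
		b.WriteString("$" + strconv.Itoa(len(a)) + "\r\n")
		b.WriteString(a + "\r\n")
	}
	return b.String()
}

func main() {
	fmt.Printf("%q\n", encodeCommand("SET", "s", "aaaaaaa"))
	// "*3\r\n$3\r\nSET\r\n$1\r\ns\r\n$7\r\naaaaaaa\r\n"
}
```

Length-prefixed bulk strings are what make the format binary-safe: values containing spaces or newlines need no escaping.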

# Regex Filter

rdb supports filtering keys with regular expressions.

Example:

```
rdb -c json -o regex.json -regex '^l.*' cases/memory.rdb
```
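A regex filter keeps a key only if the pattern matches it. The sketch below assumes Go `regexp` (RE2) semantics with unanchored matching, so `^` is what pins the match to the start of the key. This document does not say whether rdb anchors patterns itself, so treat that as an assumption. `filterKeys` is a hypothetical helper. Applied to the key names from the memory report sample, `^l.*` keeps `list` and `large`.

```go
package main

import (
	"fmt"
	"regexp"
)

// filterKeys (hypothetical) returns the keys matched by pattern, in input order.
// MatchString is unanchored: "s" matches any key containing an "s", whereas
// "^s" only matches keys that start with "s".
func filterKeys(keys []string, pattern string) ([]string, error) {
	re, err := regexp.Compile(pattern)
	if err != nil {
		return nil, err
	}
	var out []string
	for _, k := range keys {
		if re.MatchString(k) {
			out = append(out, k)
		}
	}
	return out, nil
}

func main() {
	keys := []string{"hash", "s", "e", "list", "zset", "large", "set"}
	matched, err := filterKeys(keys, "^l.*")
	if err != nil {
		panic(err)
	}
	fmt.Println(matched) // [list large]
}
```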

# Size Filter

Use the `-size` option to filter keys by object size (in bytes).

- Supported units: KB/MB/GB/TB/PB/EB. Both binary and SI prefixes denote base-2 units:
  - KB = K = KiB = 1024
  - MB = M = MiB = 1024 × KB
  - GB = G = GiB = 1024 × MB
  - TB = T = TiB = 1024 × GB
  - PB = P = PiB = 1024 × TB
  - EB = E = EiB = 1024 × PB
- Use the range format `min~max`; the magic variable `inf` represents infinity.

Example:

```
# Keys whose size is between 1KB and 1MB (inclusive)
rdb -c json -o dump.json -size 1KB~1MB cases/memory.rdb

# Keys whose size is greater than or equal to 10MB
rdb -c memory -o memory.csv -size 10MB~inf cases/memory.rdb
```

# Expiration Filter

Use the `-expire` option to filter keys by expiration time, given as Unix timestamps in seconds.

Keys whose expiration time falls between 2025-07-06 00:00:00 and 2025-07-07 00:00:00 (UTC+8):

```
# toTimestamp(2025-07-06 00:00:00 UTC+8) == 1751731200
# toTimestamp(2025-07-07 00:00:00 UTC+8) == 1751817600
rdb -c json -o dump.json -expire 1751731200~1751817600 cases/expiration.rdb
```

```
# Keys that expire before 2025-07-07 00:00:00 (UTC+8)
rdb -c json -o dump.json -expire 0~1751817600 cases/expiration.rdb
```

The magic variable `inf` represents infinity:

```
rdb -c json -o dump.json -expire 1751731200~inf cases/expiration.rdb
```

The magic variable `now` represents the current time:

```
# Keys whose expiration time is earlier than now (already expired)
rdb -c json -o dump.json -expire 0~now cases/expiration.rdb
```

```
# Keys whose expiration time is later than now
rdb -c json -o dump.json -expire now~inf cases/expiration.rdb
```

All keys that have an expiration time:

```
rdb -c json -o dump.json -expire anyexpire cases/expiration.rdb
```

All keys without an expiration time:

```
rdb -c json -o dump.json -expire noexpire cases/expiration.rdb
```

# Custom Data Usage

Use the `parser` package to iterate over the objects in an RDB file:

```
package main

import (
	"fmt"
	"os"

	"github.com/hdt3213/rdb/parser"
)

func main() {
	rdbFile, err := os.Open("dump.rdb")
	if err != nil {
		panic(err)
	}
	defer func() {
		_ = rdbFile.Close()
	}()
	decoder := parser.NewDecoder(rdbFile)
	err = decoder.Parse(func(o parser.RedisObject) bool {
		switch o.GetType() {
		case parser.StringType:
			str := o.(*parser.StringObject)
			fmt.Printf("%s %q\n", str.Key, str.Value)
		case parser.ListType:
			list := o.(*parser.ListObject)
			fmt.Printf("%s %q\n", list.Key, list.Values)
		case parser.HashType:
			hash := o.(*parser.HashObject)
			fmt.Printf("%s %q\n", hash.Key, hash.Hash)
		case parser.ZSetType:
			zset := o.(*parser.ZSetObject)
			// print each member with its score instead of pointer addresses
			for _, e := range zset.Entries {
				fmt.Println(zset.Key, e.Member, e.Score)
			}
		case parser.StreamType:
			stream := o.(*parser.StreamObject)
			fmt.Println(stream.Key, len(stream.Entries), len(stream.Groups))
		}
		// return true to continue, return false to stop the iteration
		return true
	})
	if err != nil {
		panic(err)
	}
}
```

# Generate RDB File

The library can also generate RDB files:

```
package main

import (
	"os"
	"time"

	"github.com/hdt3213/rdb/encoder"
	"github.com/hdt3213/rdb/model"
)

func main() {
	rdbFile, err := os.Create("dump.rdb")
	if err != nil {
		panic(err)
	}
	defer rdbFile.Close()
	enc := encoder.NewEncoder(rdbFile)
	err = enc.WriteHeader()
	if err != nil {
		panic(err)
	}
	auxMap := map[string]string{
		"redis-ver":    "4.0.6",
		"redis-bits":   "64",
		"aof-preamble": "0",
	}
	for k, v := range auxMap {
		err = enc.WriteAux(k, v)
		if err != nil {
			panic(err)
		}
	}

	// db 0 holds 5 keys, 1 of which has a TTL
	err = enc.WriteDBHeader(0, 5, 1)
	if err != nil {
		panic(err)
	}
	// absolute expiration time in Unix milliseconds (8 hours from now)
	expirationMs := uint64(time.Now().Add(time.Hour*8).Unix() * 1000)
	err = enc.WriteStringObject("hello", []byte("world"), encoder.WithTTL(expirationMs))
	if err != nil {
		panic(err)
	}
	err = enc.WriteListObject("list", [][]byte{
		[]byte("123"),
		[]byte("abc"),
		[]byte("la la la"),
	})
	if err != nil {
		panic(err)
	}
	err = enc.WriteSetObject("set", [][]byte{
		[]byte("123"),
		[]byte("abc"),
		[]byte("la la la"),
	})
	if err != nil {
		panic(err)
	}
	// each key must be unique within a database, so the hash and zset get their own names
	err = enc.WriteHashMapObject("hash", map[string][]byte{
		"1":  []byte("123"),
		"a":  []byte("abc"),
		"la": []byte("la la la"),
	})
	if err != nil {
		panic(err)
	}
	err = enc.WriteZSetObject("zset", []*model.ZSetEntry{
		{
			Score:  1.234,
			Member: "a",
		},
		{
			Score:  2.71828,
			Member: "b",
		},
	})
	if err != nil {
		panic(err)
	}
	err = enc.WriteEnd()
	if err != nil {
		panic(err)
	}
}
```

# Benchmark

Tested on a MacBook Air (M2, 2022) with a 1.3 GB RDB file in v9 encoding, taken from a Redis 5.0 production environment.

|usage|elapsed|speed|
|:-:|:-:|:-:|
|ToJson|25s|53.24MB/s|
|Memory|10s|133.12MB/s|
|AOF|25s|53.24MB/s|
|Top10|6s|221.87MB/s|
|Prefix|25s|53.24MB/s|
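As a sanity check, the speed column is consistent with dividing the file size by the elapsed time when 1.3 GB is counted as 1.3 × 1024 MB. For the JSON conversion:

$$
\frac{1.3 \times 1024\ \text{MB}}{25\ \text{s}} = \frac{1331.2\ \text{MB}}{25\ \text{s}} = 53.248\ \text{MB/s}
$$

The same calculation gives 133.12 MB/s for 10 s and about 221.87 MB/s for 6 s.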


# JSON Format Details

## string

```
{
    "db": 0,
    "key": "string",
    "size": 10, // estimated memory size
    "type": "string",
    "expiration": "2022-02-18T06:15:29.18+08:00",
    "value": "aaaaaaa"
}
```

## list

```
{
    "db": 0,
    "key": "list",
    "expiration": "2022-02-18T06:15:29.18+08:00",
    "size": 66,
    "type": "list",
    "values": [
        "7fbn7xhcnu",
        "lmproj6c2e",
        "e5lom29act",
        "yy3ux925do"
    ]
}
```

## set

```
{
    "db": 0,
    "key": "set",
    "expiration": "2022-02-18T06:15:29.18+08:00",
    "size": 39,
    "type": "set",
    "members": [
        "2hzm5rnmkmwb3zqd",
        "tdje6bk22c6ddlrw"
    ]
}
```

## hash

```
{
    "db": 0,
    "key": "hash",
    "size": 64,
    "type": "hash",
    "expiration": "2022-02-18T06:15:29.18+08:00",
    "hash": {
        "ca32mbn2k3tp41iu": "ca32mbn2k3tp41iu",
        "mddbhxnzsbklyp8c": "mddbhxnzsbklyp8c"
    }
}
```

## zset

```
{
    "db": 0,
    "key": "zset",
    "expiration": "2022-02-18T06:15:29.18+08:00",
    "size": 57,
    "type": "zset",
    "entries": [
        {
            "member": "zn4ejjo4ths63irg",
            "score": 1
        },
        {
            "member": "1ik4jifkg6olxf5n",
            "score": 2
        }
    ]
}
```

## stream

```
{
    "db": 0,
    "key": "mystream",
    "size": 1776,
    "type": "stream",
    "encoding": "",
    "version": 3, // 2 means RDB_TYPE_STREAM_LISTPACKS_2, 3 means RDB_TYPE_STREAM_LISTPACKS_3
    // Each StreamEntry is a node (a listpack holding several messages) in the radix tree
    // underlying the Redis stream. You do not need to care which entry a message belongs to.
    "entries": [
        {
            "firstMsgId": "1704557973866-0", // ID of the master entry at listpack head
            "fields": [ // master fields, used for compressing size
                "name",
                "surname"
            ],
            "msgs": [ // messages in entry
                {
                    "id": "1704557973866-0",
                    "fields": {
                        "name": "Sara",
                        "surname": "OConnor"
                    },
                    "deleted": false
                }
            ]
        }
    ],
    "groups": [ // consumer groups
        {
            "name": "consumer-group-name",
            "lastId": "1704557973866-0",
            "pending": [ // pending messages
                {
                    "id": "1704557973866-0",
                    "deliveryTime": 1704557998397,
                    "deliveryCount": 1
                }
            ],
            "consumers": [ // consumers in the group
                {
                    "name": "consumer-name",
                    "seenTime": 1704557998397,
                    "pending": [
                        "1704557973866-0"
                    ],
                    "activeTime": 1704557998397
                }
            ],
            "entriesRead": 1
        }
    ],
    "len": 1, // current number of messages inside this stream
    "lastId": "1704557973866-0",
    "firstId": "1704557973866-0",
    "maxDeletedId": "0-0",
    "addedEntriesCount": 1
}
```

## aux

```
[
    {
        "db": 0,
        "key": "redis-ver",
        "size": 0,
        "type": "aux",
        "encoding": "",
        "value": "7.2.5"
    },
    {
        "db": 0,
        "key": "redis-bits",
        "size": 0,
        "type": "aux",
        "encoding": "",
        "value": "64"
    },
    {
        "db": 0,
        "key": "ctime",
        "size": 0,
        "type": "aux",
        "encoding": "",
        "value": "1767107423"
    },
    {
        "db": 0,
        "key": "used-mem",
        "size": 0,
        "type": "aux",
        "encoding": "",
        "value": "1269264"
    },
    {
        "db": 0,
        "key": "aof-base",
        "size": 0,
        "type": "aux",
        "encoding": "",
        "value": "0"
    }
]
```

## functions

```
{
    "db": 0,
    "key": "functions",
    "size": 0,
    "type": "functions",
    "encoding": "functions",
    "functionsLua": "#!lua name=mylib\nredis.register_function('myfunc', function(keys, args) return 'hello' end)"
}
```