Adelya30stm/AI-powered-RAG-framework-for-automated-cross-platform-SIEM-rule-translation


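An AI- and RAG-based framework for cross-platform SIEM rule translation that addresses the inefficiency and error-proneness of migrating rules by hand.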


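# AI Rule Translator System - An AI-Powered RAG Framework for Automated Cross-Platform SIEM Rule Translation

# Seamless SPL/KQL to YARA-L conversion with enterprise-grade stability, significantly reducing manual engineering overhead in SOC environments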

## 📋 Table of Contents

1. [Introduction](#introduction)

2. [System Architecture](#system-architecture)

3. [Installation & Setup](#installation--setup)

4. [Project Structure](#project-structure)

5. [How It Works](#how-it-works)

6. [System Components](#system-components)

7. [Usage](#usage)

8. [Troubleshooting](#troubleshooting)

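### 1.3 Recent Updates (December 2025)

**Major system enhancements:**

1. **Strict "code-only" output mode:**

- The agent is now configured to output **pure YARA-L code** only.

- All conversational text, Markdown explanations, and "change notes" sections have been removed to simplify integration.

- Explanations are now provided only as **inline comments** in the generated code.

2. **"Golden Template" logic:**

- The system now prioritizes the structure of existing GitHub rules found via `github_rule_finder`.

- If a similar rule is found, its structure (indentation, variable naming, section ordering) is treated as the **source of truth**, overriding the generic generation logic.

3. **Prompt engineering and control:**

- **Enhanced prompt control:** A "MANDATORY COMPLIANCE CHECK" was added to the system prompt to ensure strict adherence to constraints.

- **Consumption monitoring:** Added visibility into prompt consumption and token usage.

- **Syntax enforcement:** Invalid YARA-L constructs (for example, the `and` and `contains` operators in the `events` section) are explicitly prohibited in favor of the correct syntax (implicit AND, `re.regex`).

4. **Performance tuning:**

- Lowered the similarity threshold for rule lookup to increase the chance of finding a relevant template.

- Increased the context limit to retrieve more documents.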
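The syntax-enforcement rule above can also be checked after generation. The following is a minimal sketch of such a post-check, not the project's implementation: `find_forbidden_constructs` is a hypothetical helper, and the list of forbidden tokens (`and`, `contains` inside `events`) is taken only from the update notes above.

```python
import re

# Section headers that can appear in a YARA-L 2.0 rule.
_SECTION_HEADER = re.compile(r"^\s*(meta|events|match|outcome|condition|options)\s*:\s*$")
# Tokens the updated prompt forbids inside `events` (per the notes above).
_FORBIDDEN = re.compile(r"\b(and|contains)\b", re.IGNORECASE)
# Double-quoted strings and backtick-quoted regex literals.
_LITERAL = re.compile(r'"(?:[^"\\]|\\.)*"|`[^`]*`')


def find_forbidden_constructs(rule_text: str) -> list[tuple[int, str]]:
    """Return (line_number, token) pairs for forbidden tokens in `events`."""
    findings = []
    current_section = None
    for line_no, line in enumerate(rule_text.splitlines(), start=1):
        header = _SECTION_HEADER.match(line)
        if header:
            current_section = header.group(1)
            continue
        if current_section != "events":
            continue
        # Blank out literals and drop trailing comments so quoted text is ignored.
        code = _LITERAL.sub('""', line).split("//", 1)[0]
        for match in _FORBIDDEN.finditer(code):
            findings.append((line_no, match.group(1).lower()))
    return findings
```

An empty result means no flagged tokens were found in the `events` section; it says nothing about whether the rule is otherwise valid.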

## 1. Introduction

### 1.1 System Purpose

Rule Translator is an intelligent **AI-powered dual-mode system** that combines RAG (Retrieval-Augmented Generation) architecture with autonomous agent workflows for automated security rule conversion.

**Two Operating Modes:**

1. **Test & Evaluation Mode** (`run_tes.py`) - Rule translation testing and validation

- Tests translation quality by comparing agent output with ground truth YARA-L rules

- Processes KQL/SPL rules from test directories (`rag/app/tests/rules_static/`)

- Outputs results to Excel (`simple_output.xlsx`) for manual review

- JSON output (`output_log.json`) for programmatic analysis

- Interactive CLI prompts for rule language selection

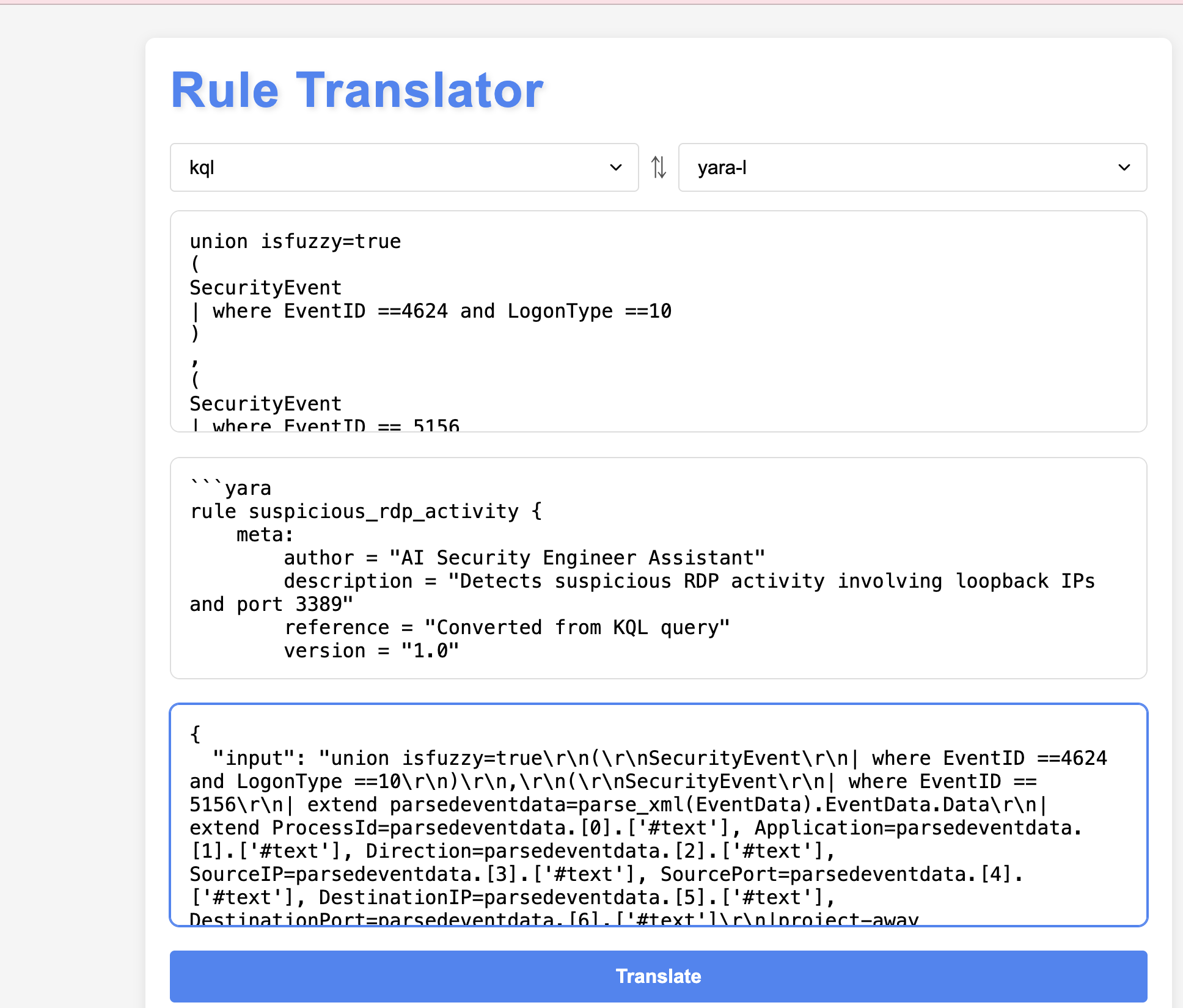

2. **Web Application Mode** - Interactive manual translation

- User-friendly web interface at http://127.0.0.1:8000/

- Real-time rule conversion with instant feedback

- **Language switcher**: Select source format (KQL or SPL) via dropdown

- Copy-paste interface for quick one-off translations

- Debug mode showing agent reasoning steps

**System Architecture:**

- **Test Framework Component:** Translation quality testing with ground truth comparison

- **Agent Component:** LangChain ReAct agent with multi-tool orchestration

- **Knowledge Layer:** Vector database (ChromaDB) with RAG retrieval

**Flexibility & Extensibility:**

- **Primary use case:** SPL/KQL → YARA-L 2.0 for Google Chronicle SecOps

- **Proven adaptability:** Successfully tested for SPL → KQL conversions

- **Easy customization:** Can be configured for any query language pair through:

- Field mapping dictionaries (JSON configuration files)

- Custom prompt templates for new languages

- Knowledge base expansion with new documentation

**Key Competitive Advantages:**

1. **Intelligent Field Mapping** - Pre-configured dictionaries with precise field translations

- KQL → UDM mappings (e.g., `EventID` → `metadata.product_event_type`)

- SPL → UDM mappings (e.g., `src` → `principal.ip`)

- Contextual field selection based on rule logic

2. **RAG-Powered Translation** - Outperforms traditional rule-based translators

- Retrieves relevant syntax documentation on-demand

- Learns from similar rule examples in knowledge base

- Adapts to complex queries beyond simple field substitution

- Understands detection logic, not just syntax conversion

3. **Agent Reasoning** - Multi-step intelligent decision making

- Analyzes rule intent before translation

- Selects appropriate YARA-L patterns (match conditions, time windows)

- Handles edge cases through iterative refinement

- Validates output structure automatically

**Why RAG beats simple translators:** Traditional tools do direct field mapping without understanding context. This RAG system retrieves relevant examples, understands detection patterns, and generates semantically correct rules that preserve the original security logic.

### 1.2 Quick Start Example

**Real-World Scenario:** Your SOC team needs to migrate a Splunk brute force detection rule to Google Chronicle.

**Input (SPL):**

```
index=windows EventCode=4625
| stats count by src_ip, user
| where count > 5
```

**What happens:**

1. **Agent analyzes** the SPL query structure

2. **Retrieves** UDM field mappings from knowledge base (EventCode 4625 → metadata.product_event_type)

3. **Finds** similar YARA-L brute force examples from rule library

4. **Converts** SPL fields to UDM format (src_ip → principal.ip, user → principal.user.userid)

5. **Generates** complete YARA-L rule with proper syntax

**Output (YARA-L):**

```
rule windows_brute_force_detection {
  meta:
    author = "EPMC-MDRS"
    description = "Detect multiple failed login attempts from same source"
    severity = "Medium"

  events:
    $event.metadata.event_type = "USER_LOGIN"
    $event.metadata.product_event_type = "4625"
    $event.principal.ip = $ip
    $event.principal.user.userid = $user
    $event.security_result.action = "BLOCK"

  match:
    $ip, $user over 15m

  condition:
    #event > 5
}
```

**Time:** about 45 seconds of agent run time (vs. 2-4 hours of manual work)

### 1.3 Problem Statement

**Problem:** Migrating security rules between different SIEM systems requires significant time investment and deep understanding of each system's syntax.

**Business Impact:**

- **Manual conversion:** 2-4 hours per rule (expert SOC analyst required)

- **Error rate:** 30-40% due to syntax differences and field mapping complexity

- **Knowledge barrier:** Requires expertise in both source and target SIEM platforms

- **Testing challenge:** Difficult to validate translation accuracy without ground truth

- **Cost:** High labor costs for skilled security engineers

**Solution:** Automated conversion using:

- **AI Agent** powered by Azure OpenAI (GPT-4) with ReAct reasoning pattern

- **Knowledge Base** of YARA-L syntax, UDM fields, and rule examples

- **Vector Database** (ChromaDB) for intelligent retrieval of relevant documentation

- **Validation** through Google Chronicle API for rule correctness

- **Test Framework** (`run_tes.py`) for translation quality assurance with ground truth comparison

### 1.4 Business Value

**Time Savings:**

- **95% reduction** in conversion time: 2-4 hours → 5-10 minutes per rule

- **Test validation**: Automated comparison with ground truth rules via `run_tes.py`

- **Immediate productivity:** No need to train analysts on new SIEM syntax

**Quality Improvements:**

- **Consistent output:** Standardized YARA-L code structure

- **Lower error rate:** AI-assisted field mapping reduces mistakes

- **Built-in validation:** Chronicle API integration catches syntax errors

- **Knowledge preservation:** RAG system learns from previous conversions

- **Quality assurance:** Test framework validates translation accuracy

**Cost Reduction:**

- **Labor savings:** Reduces SOC analyst hours by 90%

- **Faster validation:** Automated testing replaces manual review

- **Scalability:** Process unlimited rules without additional headcount

- **Self-service:** Security teams can convert rules without vendor assistance

**Strategic Benefits:**

- **Platform independence:** Easily migrate between SIEM vendors

- **Multi-tenancy:** Support multiple clients with different rule libraries

- **Continuous improvement:** System learns from new rule examples

- **Compliance:** Maintain detection coverage during platform transitions

### 1.6 Scope and Use Cases

**Primary Use Case:** SPL/KQL → YARA-L 2.0 for Google Chronicle SecOps migration

**Additional Capabilities:**

- **Cross-platform translation:** Successfully tested for SPL → KQL conversions

- **Rule normalization:** Standardizes detection logic across different SIEM platforms

- **Knowledge transfer:** Helps security teams understand different query languages

**Target Audience:**

- SOC analysts migrating between SIEM platforms

- Security engineers managing multi-vendor environments

- MSSP teams supporting multiple client SIEM technologies

**Note:** While optimized for Google Chronicle SecOps, the agent-based architecture allows adaptation to other translation pairs through prompt engineering and knowledge base updates.

## 2. System Architecture

### 2.1 Web Application Mode (Interactive Translation)

```
┌─────────────────────────────────────────────────────────────┐
│                   Web Interface (Django)                    │
│                    index.html + views.py                    │
│            [KQL/SPL Language Selector Dropdown]             │
└──────────────────────────────┬──────────────────────────────┘
                               │
                               ▼
┌─────────────────────────────────────────────────────────────┐
│                       RAG System Core                       │
│  ┌───────────────────────────────────────────────────────┐  │
│  │ LangChain ReAct Agent (rag_agent.py)                  │  │
│  │ - Max iterations: 10                                  │  │
│  │ - Timeout: 180 seconds                                │  │
│  │ - Azure OpenAI GPT-4                                  │  │
│  └───────────────────────────┬───────────────────────────┘  │
│                              │                              │
│                              ▼                              │
│  ┌───────────────────────────────────────────────────────┐  │
│  │ Agent Tools                                           │  │
│  │  ┌─────────────────────────────────────────────────┐  │  │
│  │  │ 1. security_document_retriever                  │  │  │
│  │  │    - ChromaDB vector store                      │  │  │
│  │  │    - YARA-L docs, UDM fields, Azure docs        │  │  │
│  │  └─────────────────────────────────────────────────┘  │  │
│  │  ┌─────────────────────────────────────────────────┐  │  │
│  │  │ 2. github_rule_finder                           │  │  │
│  │  │    - Search for similar YARA-L rules            │  │  │
│  │  └─────────────────────────────────────────────────┘  │  │
│  │  ┌─────────────────────────────────────────────────┐  │  │
│  │  │ 3. field_converter_tool                         │  │  │
│  │  │    - KQL/SPL → UDM mapping                      │  │  │
│  │  └─────────────────────────────────────────────────┘  │  │
│  │  ┌─────────────────────────────────────────────────┐  │  │
│  │  │ 4. google_rule_validator (optional)             │  │  │
│  │  │    - Validation via Chronicle API               │  │  │
│  │  └─────────────────────────────────────────────────┘  │  │
│  └───────────────────────────────────────────────────────┘  │
└──────────────────────────────┬──────────────────────────────┘
                               │
                               ▼
┌─────────────────────────────────────────────────────────────┐
│                Data Storage & Knowledge Base                │
│ ┌──────────────────┐ ┌─────────────────┐ ┌────────────────┐ │
│ │ ChromaDB         │ │ Config Files    │ │ Datasets       │ │
│ │ (Vector Store)   │ │ (JSON)          │ │ (Docs)         │ │
│ └──────────────────┘ └─────────────────┘ └────────────────┘ │
└─────────────────────────────────────────────────────────────┘
```

### 2.2 Test & Evaluation Mode (Translation Quality Testing)

```
┌─────────────────────────────────────────────────────────────┐
│                Test Script CLI (run_tes.py)                 │
│                                                             │
│  Input:        rag/app/tests/rules_static/kql/*.kql         │
│                rag/app/tests/rules_static/spl/*.spl         │
│  Ground truth: *.yara files                                 │
│  Output:       simple_output.xlsx + output_log.json         │
│                                                             │
│  ┌───────────────────────────────────────────────────────┐  │
│  │ Test Framework (FromDir class)                        │  │
│  │ - Scans test directories for rule pairs               │  │
│  │ - Finds matching rule + yara file combinations        │  │
│  │ - Interactive: Prompts for KQL or SPL language        │  │
│  └───────────────────────────────────────────────────────┘  │
└──────────────────────────────┬──────────────────────────────┘
                               │
                               ▼
┌─────────────────────────────────────────────────────────────┐
│                    Test Processing Loop                     │
│                                                             │
│  For each test pair:                                        │
│  ┌───────────────────────────────────────────────────────┐  │
│  │ 1. Load rule file content                             │  │
│  └───────────────────────────┬───────────────────────────┘  │
│                              ▼                              │
│  ┌───────────────────────────────────────────────────────┐  │
│  │ 2. Call RAG System                                    │  │
│  │    - LangChain ReAct Agent                            │  │
│  │    - Vector retrieval from ChromaDB                   │  │
│  │    - Field mapping via dictionaries                   │  │
│  │    - Rule generation via GPT-4                        │  │
│  └───────────────────────────┬───────────────────────────┘  │
│                              ▼                              │
│  ┌───────────────────────────────────────────────────────┐  │
│  │ 3. Extract output from agent response                 │  │
│  │    - response['output'] contains YARA-L rule          │  │
│  └───────────────────────────┬───────────────────────────┘  │
│                              ▼                              │
│  ┌───────────────────────────────────────────────────────┐  │
│  │ 4. Load ground truth YARA-L from .yara file           │  │
│  └───────────────────────────┬───────────────────────────┘  │
│                              ▼                              │
│  ┌───────────────────────────────────────────────────────┐  │
│  │ 5. Store comparison in results                        │  │
│  │    DataFrame: [rule_content,                          │  │
│  │     yara_agent_translation, yara_correct_rule]        │  │
│  └───────────────────────────────────────────────────────┘  │
│                                                             │
└──────────────────────────────┬──────────────────────────────┘
                               │
                               ▼
┌─────────────────────────────────────────────────────────────┐
│                      Output Generation                      │
│                                                             │
│ ┌───────────────────────────┐ ┌───────────────────────────┐ │
│ │ Excel Spreadsheet         │ │ JSON File                 │ │
│ │ (simple_output.xlsx)      │ │ (output_log.json)         │ │
│ │                           │ │                           │ │
│ │ Columns:                  │ │ Same data structure       │ │
│ │ - rule_content            │ │ for programmatic access   │ │
│ │ - yara_agent_translation  │ │                           │ │
│ │ - yara_correct_rule       │ │                           │ │
│ └───────────────────────────┘ └───────────────────────────┘ │
│                                                             │
│  Ready for:                                                 │
│  - Side-by-side comparison in Excel                         │
│  - Translation quality assessment                           │
│  - Test result documentation                                │
└─────────────────────────────────────────────────────────────┘
```

### 2.3 Technology Stack

**Backend:**

- Django 5.2 - web framework

- LangChain 0.3 - AI agent orchestration

- Azure OpenAI GPT-4 - LLM

- ChromaDB - vector database

- Python 3.13

**Frontend:**

- HTML5/CSS3/JavaScript (Vanilla)

- AJAX for async requests

**AI/ML:**

- sentence-transformers - embeddings

- Azure OpenAI Embeddings

- ReAct Agent pattern

## 3. Installation & Setup

### 3.1 Requirements

- Python 3.10+

- Azure OpenAI API key

- 4GB RAM minimum

- 2GB free disk space

### 3.2 Installation

```
# 1. Clone the repository
git clone <repository-url>
cd adelya_rag

# 2. Create virtual environment
python3 -m venv .venv
source .venv/bin/activate    # Linux/Mac
# or
.venv\Scripts\activate       # Windows

# 3. Install dependencies
pip install -r rag/requirements.txt
pip install django

# 4. Configure environment variables
cp .env.example .env
# Edit .env file
```

### 3.3 .env File Configuration

```
# Azure OpenAI Configuration
AZURE_OPENAI_API_KEY=your-api-key-here
AZURE_OPENAI_ENDPOINT=https://ai-proxy.lab.epam.com
AZURE_OPENAI_API_VERSION=2024-02-01

# Deployment Names
AZURE_OPENAI_EMBEDDINGS_DEPLOYMENT_NAME=text-embedding-ada-002
AZURE_OPENAI_CHAT_DEPLOYMENT_NAME=gpt-4o

# Google Chronicle API (optional)
GOOGLE_API_FILE=path/to/google-credentials.json
```
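Before starting the server, it can help to confirm that the settings above are actually present. The sketch below is illustrative only: `missing_settings` is a hypothetical helper, and it assumes the `.env` values have already been loaded into the process environment (how the project loads them is not stated here).

```python
import os

# Variable names taken from the .env example above.
REQUIRED_SETTINGS = (
    "AZURE_OPENAI_API_KEY",
    "AZURE_OPENAI_ENDPOINT",
    "AZURE_OPENAI_API_VERSION",
    "AZURE_OPENAI_EMBEDDINGS_DEPLOYMENT_NAME",
    "AZURE_OPENAI_CHAT_DEPLOYMENT_NAME",
)
OPTIONAL_SETTINGS = ("GOOGLE_API_FILE",)  # only needed for Chronicle validation


def missing_settings(env=None) -> list[str]:
    """Return required setting names that are unset or blank in `env`."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_SETTINGS if not env.get(name, "").strip()]
```

Calling `missing_settings()` with no argument checks the current environment; a non-empty list names the variables that still need values.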

### 3.4 Database Initialization

```
# Apply Django migrations
python manage.py migrate

# Create superuser (optional)
python manage.py createsuperuser
```

### 3.5 Running the Server

```
python manage.py runserver
# Server available at: http://127.0.0.1:8000/
```

## 4. Project Structure

```
adelya_rag/
├── core/                          # Django project settings
│   ├── settings.py                # Settings
│   ├── urls.py                    # URL routing
│   └── wsgi.py                    # WSGI entry point
│
├── rag_app/                       # Django application
│   ├── views.py                   # API endpoints
│   ├── templates/
│   │   └── index.html             # Web interface
│   └── utils/
│       └── rag_service.py         # Service layer
│
├── rag/                           # RAG System Core
│   └── app/
│       └── src/
│           └── mdrs/
│               ├── rag.py                 # Main RAG logic
│               ├── agent/
│               │   ├── rag_agent.py       # ReAct agent
│               │   └── simple_agent.py    # Simple agent
│               │
│               ├── configs/               # Configuration files
│               │   ├── dataset_configs.json
│               │   ├── udm_fields_mapping.json
│               │   └── spl_to_udm_fields_mapping.json
│               │
│               ├── datasets/              # Knowledge base
│               │   ├── azure-docs/        # Azure documentation
│               │   ├── kql-rules/         # KQL rule examples
│               │   ├── secops-docs/       # SecOps docs
│               │   ├── splunk-rules/      # Splunk rules
│               │   ├── udm-fields/        # UDM field docs
│               │   └── yaral-rules/       # YARA-L examples
│               │
│               ├── persist/               # ChromaDB storage
│               │   └── chroma.sqlite3
│               │
│               ├── preprompt/             # System prompts
│               │   ├── base_preprompt.py
│               │   ├── kql_preprompt.py
│               │   └── spl_preprompt.py
│               │
│               ├── retrieval/             # RAG components
│               │   ├── loader.py          # Document loading
│               │   ├── split.py           # Text splitting
│               │   ├── search.py          # Vector search
│               │   ├── retriever.py       # Main retriever
│               │   └── annotate.py        # Document annotation
│               │
│               └── tool/                  # Agent tools
│                   ├── search_tool.py
│                   ├── field_converter_tool.py
│                   ├── github_rule_finder.py
│                   ├── google_validator.py
│                   └── udm_web_search_tool.py
│
├── prompts/                       # Prompt templates
│   ├── chronicle.md
│   └── rules_templating.txt
│
├── scripts/                       # Utility scripts
│   ├── install_bindplane.sh
│   └── install_bindplane.ps1
│
├── manage.py                      # Django management
├── requirements.txt               # Python dependencies
└── README.md                      # This file
```

## 5. How It Works

### 5.1 Overall Workflow

```
        User Input (KQL/SPL Rule)
                    │
                    ▼
        ┌───────────────────────┐
        │  Django View Handler  │
        │      (views.py)       │
        └───────────┬───────────┘
                    │
                    ▼
        ┌───────────────────────┐
        │ get_agent_response()  │
        │       (rag.py)        │
        └───────────┬───────────┘
                    │
                    ▼
┌───────────────────────────────────────┐
│      ReAct Agent Execution Loop       │
│  ┌─────────────────────────────────┐  │
│  │ Iteration 1-10                  │  │
│  │  ┌───────────────────────────┐  │  │
│  │  │ Thought: Analyze input    │  │  │
│  │  └───────────────────────────┘  │  │
│  │  ┌───────────────────────────┐  │  │
│  │  │ Action: Use tool          │  │  │
│  │  └───────────────────────────┘  │  │
│  │  ┌───────────────────────────┐  │  │
│  │  │ Observation: Tool result  │  │  │
│  │  └───────────────────────────┘  │  │
│  └─────────────────────────────────┘  │
└───────────────────┬───────────────────┘
                    │
                    ▼
            ┌───────────────┐
            │ Final Answer  │
            │ (YARA-L Rule) │
            └───────┬───────┘
                    │
                    ▼
            ┌───────────────┐
            │   Response    │
            │    to User    │
            └───────────────┘
```

### 5.2 Detailed Conversion Process

#### Step 1: Receiving Input Data

```
# views.py
def render_index(request):
    if request.method == 'POST':
        rule_lang = request.POST.get('from')         # 'kql' or 'spl'
        query_text = request.POST.get('query_text')  # Rule content
```

#### Step 2: Agent Initialization

```
# rag.py
def get_agent_response(rule_lang: str, user_input: str):
    agent = create_agent_with_tools(rule_lang)
    # agent contains:
    # - LLM (Azure OpenAI GPT-4)
    # - Tools (retriever, github finder, etc.)
    # - Prompt template (kql_template or spl_template)
```

#### Step 3: ReAct Agent Loop

Agent executes the Thought → Action → Observation cycle:

**Iteration 1:**

```
Thought: I need to understand the KQL rule structure
Action: security_document_retriever
Action Input: "KQL EventID field mapping to UDM"
Observation: [Retrieved documentation about UDM fields]
```

**Iteration 2:**

```
Thought: I need a template for YARA-L structure
Action: github_rule_finder
Action Input: "authentication failure detection"
Observation: [Found similar YARA-L rule from GitHub]
```

**Iteration 3:**

```
Thought: I need to map KQL fields to UDM
Action: KQLtoUDMFieldsConverterTool
Action Input: {"EventID": "4625", "LogonType": "10"}
Observation: [Mapped fields to UDM paths]
```

**Final Iteration:**

```
Thought: I have all information to generate the rule
Final Answer: [Complete YARA-L rule code]
```
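The Thought → Action → Observation cycle shown in the iterations above can be reduced to a small control loop. This is a toy sketch, not the LangChain implementation: `scripted_policy` is a hypothetical stand-in for the LLM, the tools are stubs that only echo their input, and `run_react_loop` is an illustrative name.

```python
from typing import Callable

# Stand-in tools; the real ones query ChromaDB and the local rule library.
TOOLS: dict[str, Callable[[str], str]] = {
    "security_document_retriever": lambda q: f"[docs for: {q}]",
    "github_rule_finder": lambda q: f"[template for: {q}]",
}


def scripted_policy(history: list[str]) -> dict:
    """Hypothetical LLM stand-in: choose the next step from the step count."""
    script = [
        {"thought": "I need UDM field docs",
         "action": "security_document_retriever",
         "action_input": "KQL EventID field mapping to UDM"},
        {"thought": "I need a YARA-L template",
         "action": "github_rule_finder",
         "action_input": "authentication failure detection"},
        {"thought": "I have all information", "final_answer": "rule demo { ... }"},
    ]
    return script[min(len(history), len(script) - 1)]


def run_react_loop(policy, tools, max_iterations: int = 10) -> dict:
    """Run Thought -> Action -> Observation until a final answer or the cap."""
    history: list[str] = []
    for _ in range(max_iterations):
        step = policy(history)
        if "final_answer" in step:
            return {"output": step["final_answer"], "steps": history}
        observation = tools[step["action"]](step["action_input"])
        history.append(f"{step['action']} -> {observation}")
    return {"output": None, "steps": history}  # iteration cap reached
```

The `max_iterations` cap plays the same role as the limit of 10 in the agent configuration: without a final answer, the loop stops instead of running forever.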

#### Step 4: Returning Results

```
# views.py
return JsonResponse({
    'status': 'success',
    'translated_text': final_rule_code,
    'debug_text': debug_information
})
```

## 6. System Components

### 6.1 RAG Agent (rag_agent.py)

**Purpose:** AI agent orchestration with tool access

**Key Parameters:**

```
agent_executor = AgentExecutor(
    agent=agent,
    tools=tools,
    verbose=True,
    max_iterations=10,          # Maximum 10 cycles
    max_execution_time=180,     # 3 minute timeout
    handle_parsing_errors=True,
    return_intermediate_steps=True,
    early_stopping_method="generate"
)
```

**How it works:**

1. Receives prompt with instructions

2. Analyzes incoming rule

3. Selects appropriate tools

4. Iteratively collects information

5. Generates final YARA-L rule
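Because the executor is created with `return_intermediate_steps=True`, its result includes an `intermediate_steps` list of `(action, observation)` pairs next to `output`. The sketch below shows one way such steps could be rendered as debug text. `format_debug_steps` is a hypothetical helper, and whether the project builds its `debug_text` this way is an assumption. A `SimpleNamespace` stands in for LangChain's `AgentAction`, which exposes `tool` and `tool_input`.

```python
from types import SimpleNamespace


def format_debug_steps(response: dict) -> str:
    """Render (action, observation) pairs from `intermediate_steps` as text."""
    lines = []
    steps = response.get("intermediate_steps", [])
    for index, (action, observation) in enumerate(steps, start=1):
        lines.append(f"Step {index}")
        lines.append(f"  Action: {action.tool}")
        lines.append(f"  Action Input: {action.tool_input}")
        # Tool output can be long, so keep only the first 200 characters.
        lines.append(f"  Observation: {str(observation)[:200]}")
    return "\n".join(lines)


# Stand-in response shaped like an AgentExecutor result.
fake_action = SimpleNamespace(
    tool="github_rule_finder",
    tool_input="authentication failure detection",
)
fake_response = {
    "output": "rule demo { ... }",
    "intermediate_steps": [(fake_action, "[Found similar YARA-L rule]")],
}
print(format_debug_steps(fake_response))
```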

### 6.2 Security Document Retriever

**Purpose:** Search for relevant documentation via vector search

**Data Sources:**

- YARA-L syntax documentation

- UDM field descriptions

- Azure SecOps documentation

- Rule examples

**Process:**

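```
# `embeddings` and `vectorstore` are the configured embedding model and Chroma store.
def retrieve_documents(query: str, k: int = 5) -> list[str]:
    # 1. Query embedding
    query_embedding = embeddings.embed_query(query)
    # 2. Search in ChromaDB (a vector input needs the *_by_vector variant)
    results = vectorstore.similarity_search_by_vector(query_embedding, k=k)
    # 3. Return documents
    return [doc.page_content for doc in results]
```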

### 6.3 GitHub Rule Finder

**Purpose:** Find similar YARA-L rules to use as templates

**Process:**

1. Analyze incoming rule (detection type, fields)

2. Search in local YARA-L rules database

3. Rank by similarity score

4. Return most similar rule
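The four steps above amount to a score-rank-threshold search. The sketch below illustrates that shape with token-set Jaccard similarity, which is a stand-in chosen for simplicity; the similarity measure the project actually uses is not stated here. The function names and the default threshold are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; dots are kept so UDM paths stay intact."""
    return set(re.findall(r"[a-z0-9_.]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared tokens divided by all tokens (0.0 when both sets are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def find_most_similar_rule(query: str, library: dict[str, str], threshold: float = 0.1):
    """Return (name, score) of the best match, or None if below the threshold."""
    query_tokens = tokenize(query)
    scored = [(jaccard(query_tokens, tokenize(body)), name)
              for name, body in library.items()]
    if not scored:
        return None
    score, name = max(scored)
    return (name, score) if score >= threshold else None
```

Lowering `threshold` makes a template more likely to be returned, at the cost of weaker matches, which is the trade-off described under performance tuning.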

### 6.4 Field Converter Tool

**Purpose:** Map fields from KQL/SPL to UDM format

**Mapping Examples:**

**KQL → UDM:**

```
{
  "EventID": "metadata.product_event_type",
  "Account": "principal.user.userid",
  "IpAddress": "principal.ip",
  "Computer": "principal.hostname"
}
```

**SPL → UDM:**

```
{
  "src": "src.ip",
  "dest": "target.ip",
  "user": "principal.user.userid",
  "signature": "metadata.product_event_type"
}
```
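A dictionary lookup of this kind can be sketched in a few lines. The example below reuses the KQL sample mapping shown above; `convert_fields` is a hypothetical helper, not the tool's actual interface, and it reports fields without a mapping so they can be handled separately.

```python
import json

# Sample mapping copied from the KQL example above.
KQL_TO_UDM = json.loads("""
{
  "EventID": "metadata.product_event_type",
  "Account": "principal.user.userid",
  "IpAddress": "principal.ip",
  "Computer": "principal.hostname"
}
""")


def convert_fields(fields: dict[str, str], mapping: dict[str, str]):
    """Split input fields into UDM-mapped values and names with no mapping."""
    mapped, unmapped = {}, []
    for name, value in fields.items():
        if name in mapping:
            mapped[mapping[name]] = value
        else:
            unmapped.append(name)
    return mapped, unmapped
```

For the input `{"EventID": "4625", "LogonType": "10"}`, the first field resolves to `metadata.product_event_type` while `LogonType` is returned as unmapped, since the sample dictionary has no entry for it.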

### 6.5 Google Rule Validator (Optional)

**Purpose:** Validate YARA-L rules via Chronicle API

**Requirements:**

- Google service account JSON

- Access to Chronicle API

**Process:**

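```
# Illustrative pseudocode; `chronicle_api` stands for the Chronicle client.
def validate(rule_content):
    # 1. Send rule for validation
    response = chronicle_api.validate_rule(rule_content)
    # 2. Check response
    if response.valid:
        return "Rule is valid"
    else:
        return f"Errors: {response.errors}"
```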
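Since the Chronicle validator is optional, a cheap local pre-check can catch obviously malformed output before any API call. The sketch below is an assumption-laden illustration, not part of the project: `check_rule_structure` is a hypothetical helper, it treats `meta`, `events`, and `condition` as the sections every rule needs, and its brace count is naive (braces inside strings or regex literals would confuse it).

```python
import re

REQUIRED_SECTIONS = ("meta", "events", "condition")


def check_rule_structure(rule_text: str) -> list[str]:
    """Return a list of structural problems; an empty list means none found."""
    problems = []
    if not re.match(r"\s*rule\s+[A-Za-z_][A-Za-z0-9_]*\s*\{", rule_text):
        problems.append("rule must start with 'rule <name> {'")
    if rule_text.count("{") != rule_text.count("}"):
        problems.append("unbalanced braces")
    for section in REQUIRED_SECTIONS:
        if not re.search(rf"^\s*{section}\s*:", rule_text, flags=re.MULTILINE):
            problems.append(f"missing '{section}:' section")
    return problems
```

Passing this check does not make a rule valid; it only filters out output that the API would certainly reject.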

### 6.6 Prompt Templates

**base_preprompt.py:**

- General agent instructions

- Workflow guidelines

- Tool usage rules

**kql_preprompt.py:**

- KQL-specific instructions

- KQL → YARA-L conversion examples

- UDM field mapping for KQL

**spl_preprompt.py:**

- SPL-specific instructions

- SPL → YARA-L conversion examples

- UDM field mapping for Splunk

### 6.7 Vector Store (ChromaDB)

**Structure:**

```
persist/
├── chroma.sqlite3          # SQLite database
└── [collection-id]/        # Vectors and metadata
    ├── data_level0.bin     # HNSW index
    └── length.bin          # Document lengths
```

**Collections:**

- `azure-docs` - Azure documentation

- `secops-docs` - Chronicle SecOps docs

- `udm-fields` - UDM field descriptions

- `yaral-rules` - Example YARA-L rules

## 7. Usage

### 7.1 Web Interface

1. Open http://127.0.0.1:8000/

2. Select source language (KQL or SPL)

3. Paste rule into text field

4. Click "Translate"

5. Get YARA-L rule in output

### 7.2 Conversion Example

**Input KQL Rule:**

```
SecurityEvent
| where EventID == 4625
| where LogonType == 10
| summarize FailedAttempts = count() by Account, Computer
| where FailedAttempts > 5
```

**Output YARA-L Rule:**

```
rule rdp_brute_force_detection {
  meta:
    author = "E"
    description = "Detect RDP brute force attempts"
    severity = "High"

  events:
    $fail.metadata.event_type = "USER_LOGIN"
    $fail.metadata.product_event_type = "4625"
    $fail.target.user.userid = $user
    $fail.principal.hostname = $hostname
    $fail.security_result.action = "BLOCK"

  match:
    $user, $hostname over 15m

  condition:
    #fail > 5
}
```

### 7.3 Test & Evaluation Mode (Translation Quality Testing)

**Purpose:** Test translation quality by comparing agent output with ground truth YARA-L rules.

**Script:** `run_tes.py`

**Prerequisites:**

- Configured `.env` file with Azure OpenAI credentials

- Python packages: `pandas`, `openpyxl`

**Test Data Structure:**

```
rag/app/tests/rules_static/
├── kql/
│   ├── test_case_1/
│   │   ├── rule.kql     # Input KQL rule
│   │   └── rule.yara    # Ground truth YARA-L
│   └── test_case_2/
│       ├── rule.kql
│       └── rule.yara
└── spl/
    ├── test_case_1/
    │   ├── rule.spl     # Input SPL rule
    │   └── rule.yara    # Ground truth YARA-L
    └── test_case_2/
        ├── rule.spl
        └── rule.yara
```
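Given this layout, discovering test pairs is a directory walk. The following is a minimal sketch and not the project's `FromDir` class: `find_rule_pairs` is a hypothetical function that assumes one input rule and one `.yara` file per test-case directory, as in the tree above.

```python
from pathlib import Path


def find_rule_pairs(root: Path, rule_lang: str) -> list[tuple[Path, Path]]:
    """Return (input_rule, ground_truth) paths for each complete test case."""
    suffix = f".{rule_lang.lower()}"  # ".kql" or ".spl"
    lang_dir = root / rule_lang.lower()
    pairs: list[tuple[Path, Path]] = []
    if not lang_dir.is_dir():
        return pairs
    for case_dir in sorted(p for p in lang_dir.iterdir() if p.is_dir()):
        rules = sorted(case_dir.glob(f"*{suffix}"))
        truths = sorted(case_dir.glob("*.yara"))
        if rules and truths:  # skip directories missing either file
            pairs.append((rules[0], truths[0]))
    return pairs
```

For example, `find_rule_pairs(Path("rag/app/tests/rules_static"), "KQL")` would return one pair per `kql/test_case_*` directory that contains both files.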

**Usage:**

```
# Activate virtual environment
source .venv/bin/activate

# Run as Python module (recommended)
.venv/bin/python -m rag.app.src.mdrs.run_tes

# Interactive prompt will ask:
# Enter rule language: KQL or SPL:
```

**Process:**

1. Prompts user to select rule language (KQL or SPL)

2. Scans corresponding test directory for rule/yara pairs

3. For each test case:

- Loads input rule content

- Calls agent for translation

- Extracts translated YARA-L from `response['output']`

- Loads ground truth YARA-L from `.yara` file

- Stores all three for comparison

4. Saves results to Excel and JSON

**Output Structure:**

**Excel file: `simple_output.xlsx`**

| Column | Description |
|--------|-------------|
| `rule_content` | Original KQL/SPL rule text |
| `yara_agent_translation` | Agent-generated YARA-L rule |
| `yara_correct_rule` | Ground truth YARA-L from test file |

**JSON file: `output_log.json`**

- Same structure as Excel

- Suitable for programmatic analysis

- Preserves formatting for diff tools
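For programmatic analysis, the three fields can be turned into a rough per-rule score. The sketch below uses `difflib.SequenceMatcher` from the standard library and assumes the JSON file is a list of objects keyed by the three column names; the actual layout of `output_log.json` may differ. Textual similarity is only a proxy: two rules can differ in text and still be equivalent, or look alike and differ in logic.

```python
import json
from difflib import SequenceMatcher


def _normalize(text: str) -> str:
    """Collapse all whitespace so indentation differences are ignored."""
    return " ".join(text.split())


def similarity(a: str, b: str) -> float:
    """Character-level similarity ratio in [0, 1] after normalization."""
    return SequenceMatcher(None, _normalize(a), _normalize(b)).ratio()


def score_records(records: list[dict]) -> list[float]:
    """Score each agent translation against its ground truth rule."""
    return [
        round(similarity(r["yara_agent_translation"], r["yara_correct_rule"]), 3)
        for r in records
    ]


# Assumed layout: a JSON list of objects with the three column names.
sample = json.loads("""[
  {"rule_content": "SecurityEvent | where EventID == 4625",
   "yara_agent_translation": "rule a {\\n  condition:\\n    $e\\n}",
   "yara_correct_rule": "rule a { condition: $e }"}
]""")
print(score_records(sample))
```

Low scores are a prompt for manual review in the Excel sheet rather than a verdict on correctness.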

**Example Output:**

```
$ .venv/bin/python -m rag.app.src.mdrs.run_tes
Enter rule language: KQL or SPL: KQL

Processing KQL rule: rule.kql
OfficeActivity
| where RecordType =~ "SharePointFileOperation"
| where Operation =~ "FileUploaded"
...

> Entering new AgentExecutor chain...
[Agent thinking and tool usage...]
> Finished chain.

Results saved to:
- simple_output.xlsx
- output_log.json
```

**Use Cases:**

- **Quality Assurance**: Compare agent translations with expert-written rules

- **Regression Testing**: Verify improvements don't break existing translations

- **Evaluation**: Measure translation accuracy and identify edge cases

- **Documentation**: Create test reports for stakeholders

**Performance:**

- Average: 30-60 seconds per test case

- Depends on agent complexity and number of tool calls

- Single rule tested per run (breaks after first test pair)

### 7.4 API Usage (Programmatic)

```
from rag.app.src.mdrs.rag import get_agent_response

# Convert KQL rule
result = get_agent_response(
    rule_lang='kql',
    user_input='SecurityEvent | where EventID == 4625'
)

# Result
yaral_rule = result['output']
print(yaral_rule)
```

## 8. Troubleshooting

### 8.1 Issue: Infinite Agent Loop

**Symptoms:**

- Agent executes more than 10 iterations

- Doesn't return final result

- Timeout after 180 seconds

**Solution:**

1. Check `max_iterations` in `rag_agent.py`:

```
max_iterations=10  # Should be 10 or less
```

2. Simplify prompt (remove "MUST ALWAYS" instructions)

3. Check logs:

```
# Django console will show Thought/Action/Observation
```

### 8.2 Issue: "[object Object] undefined"

**Symptoms:**

- Web interface displays "[object Object]"

- No readable rule text

**Solution:**

Check `views.py` - should extract `output`:

```
if isinstance(answer, dict):
    translated_text = answer.get('output', str(answer))
```
