Shad0wMazt3r/The-Scaffolding

GitHub: Shad0wMazt3r/The-Scaffolding

An agent-oriented framework for automated offensive orchestration that addresses context retention and noise in CTF and bug bounty research.

# The Scaffolding - Bug Bounty and CTF Framework

## Agent-Agnostic Offensive Orchestration

The Scaffolding is an open framework for autonomously solving CTF challenges and conducting bug bounty research. It bridges high-level security reasoning with deterministic execution by combining the Model Context Protocol (MCP) with a persistent, self-improving agent knowledge base.

**Plug it into any AI agent: Claude Code, GitHub Copilot, Codex, Gemini, OpenCode, and others, with minimal configuration.**

## What It Does

Most AI agents fail at offensive security tasks because they lack structured recon, get overwhelmed by noise, and lose context across long sessions. The Scaffolding solves this by providing:

- **Structured skill loading**: agents load only the skills relevant to the current challenge type (web, pwn, crypto, etc.), keeping context clean

- **LatticeMind integration**: automated scanning with confidence-scored findings, so the agent reasons over signal, not noise

- **Kali MCP integration**: direct access to standard security tooling without manual setup

- **Persistent notes**: session state is externalized, preventing context rot on long solves

- **Self-improving documentation**: solve outcomes feed back into skill files, making the harness better over time

## Demo

The following is a demo of an agent using The Scaffolding to solve a hard web challenge.

https://github.com/user-attachments/assets/5774267e-0e5c-414b-99d3-1b9de2bc0444

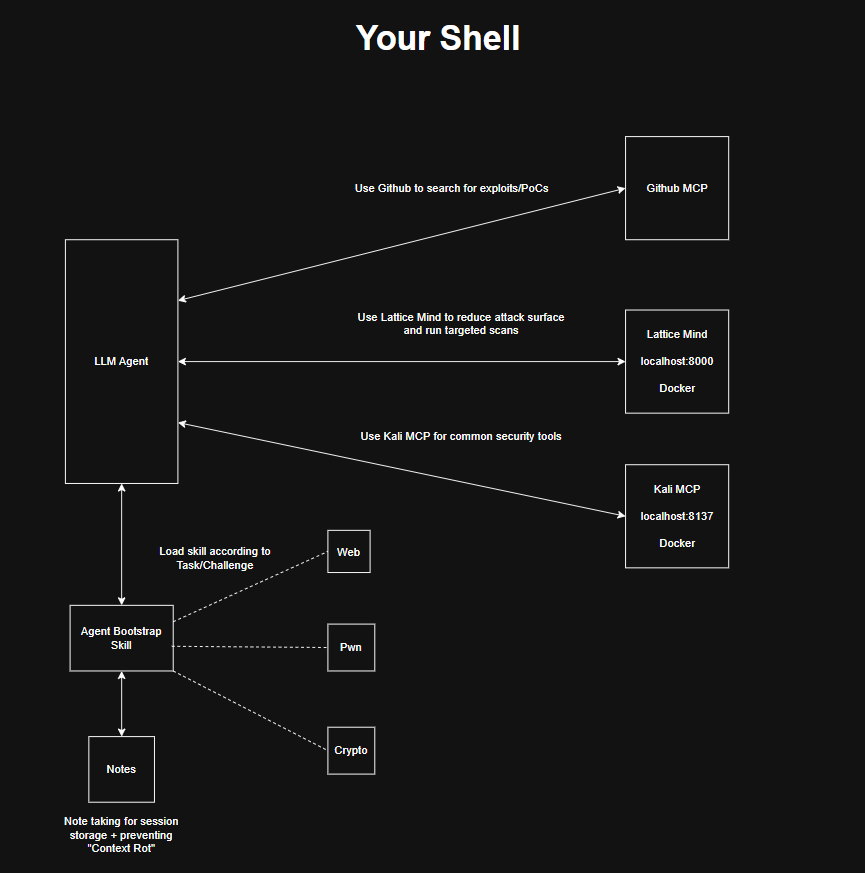

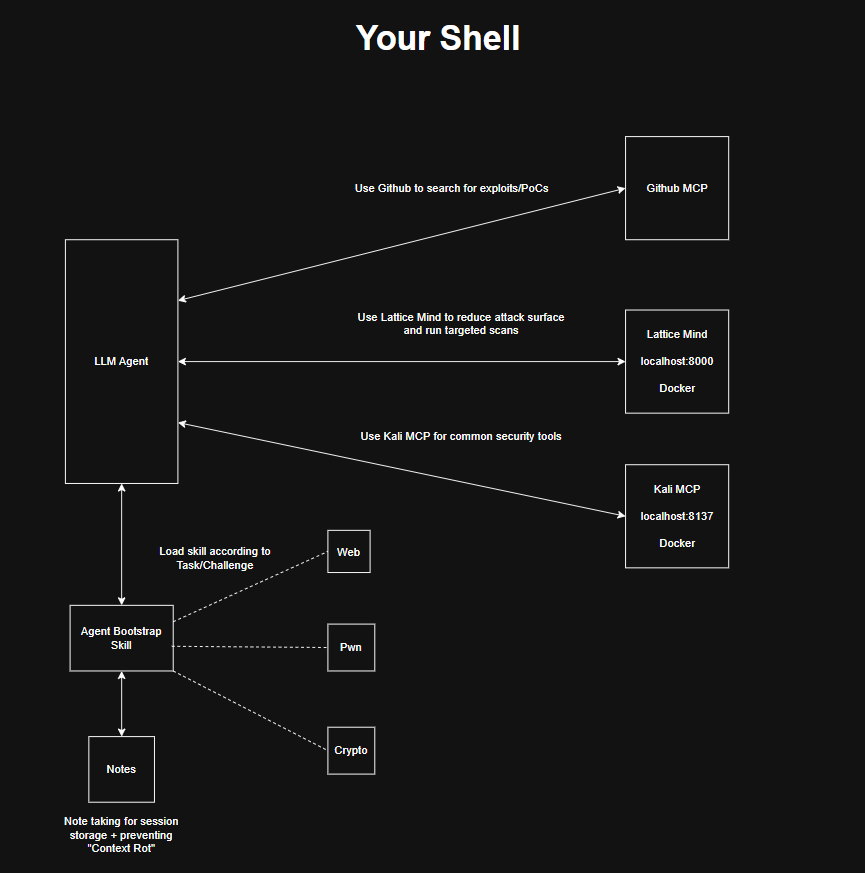

## Architecture

The agent sits at the center of three tool layers:

- **LatticeMind** — runs targeted scans, scores findings by confidence, surfaces candidates for the agent to reason through

- **Kali MCP** — executes standard security tools on demand

- **GitHub MCP** — searches for exploits, PoCs, and reference implementations

The bootstrap skill loads domain-specific knowledge at session start. Notes persist session state and feed back into skills after each solve.
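The idea of externalized notes can be illustrated with a minimal sketch. The `notes.jsonl` file name, the `append_note` and `load_notes` helpers, and the record fields are all hypothetical and are not taken from this repository; the point is only that state written to disk survives a context reset.

```python
import json
import tempfile
from pathlib import Path


def append_note(path: Path, phase: str, text: str) -> None:
    """Append one note as a JSON line so earlier notes are never rewritten."""
    record = {"phase": phase, "text": text}
    with path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")


def load_notes(path: Path) -> list[dict]:
    """Read all notes back, for example at the start of a resumed session."""
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]


if __name__ == "__main__":
    # Hypothetical notes file in a throwaway directory.
    notes_file = Path(tempfile.mkdtemp()) / "notes.jsonl"
    append_note(notes_file, "recon", "login form found at /admin")
    append_note(notes_file, "web", "id parameter looks injectable")
    for note in load_notes(notes_file):
        print(note["phase"], "-", note["text"])
```

An append-only JSON Lines file is one simple choice here because a crashed or truncated session can lose at most the last line.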

## Quick Start

**Prerequisites**

- Docker

- An AI agent with MCP support

**Setup**

1. Clone this repository

2. Set up [LatticeMind](https://github.com/Shad0wMazt3r/Lattice-Mind) and run it on Docker

3. Run the MCP server: `docker compose --profile dev up`

4. Add the MCP server to your agent's configuration

5. Set up [Kali MCP](https://github.com/k3nn3dy-ai/kali-mcp) on a separate port (recommended: `8137`)

6. Start your agent and load the `bug-hunt-framework` instructions
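Before starting the agent, it can help to confirm that the MCP servers are actually listening. The sketch below is a generic TCP reachability check and is not part of this repository: `port_is_open` is a hypothetical helper and `localhost` is an assumption about where the servers run. Port `8137` is the Kali MCP port recommended in the setup steps.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable hosts.
        return False


if __name__ == "__main__":
    # Assumption: Kali MCP runs on this machine on the recommended port.
    status = "reachable" if port_is_open("localhost", 8137) else "not reachable"
    print(f"Kali MCP on port 8137: {status}")
```

A successful connection only shows that something is listening on the port; it does not verify that the listener speaks MCP.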

## Project Structure

```
(root)/
├── .agents/      # Agent skill definitions
├── .cursor/      # Cursor IDE configuration
├── .gemini/      # Gemini CLI configuration
├── .github/      # GitHub integration
├── .opencode/    # OpenCode agent configuration
├── kali-mcp/     # Kali Linux MCP server integration
├── AGENTS.md     # Shared agent instructions
├── HUMAN.md      # Human-facing documentation
└── README.md
```

## Skills

Domain-specific skill files are loaded by the agent based on challenge type. Each skill encodes recon patterns, attack chains, and lessons learned from past solves.

| Skill | Coverage |
|---|---|
| `agent-setup` | Tool readiness and dependency checks |
| `agent-calibration` | Workflow optimization and feedback |
| `crypto` | Encoding, RSA, elliptic curve, hash attacks |
| `forensics` | File, memory, network, and multimedia analysis |
| `mobile` | Mobile application security testing |
| `network` | Reconnaissance and network assessment |
| `pwn` | Stack, heap, and format string exploitation |
| `recon` | Target discovery and reconnaissance |
| `reverse-engineering` | Binary analysis and RE |
| `web` | Web application security testing |
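Selective loading can be pictured as a lookup from challenge type to a short list of skills. This is a hypothetical sketch: the `select_skills` function and the decision to always load `agent-setup` are assumptions for illustration, not the repository's actual router. Only the skill names come from the table above.

```python
# Domain skill names copied from the table above.
DOMAIN_SKILLS = {
    "crypto", "forensics", "mobile", "network",
    "pwn", "recon", "reverse-engineering", "web",
}

# Assumption: a setup skill is loaded for every session.
BASE_SKILLS = ["agent-setup"]


def select_skills(challenge_type: str) -> list[str]:
    """Return the base skills plus the single skill matching the challenge type."""
    key = challenge_type.strip().lower()
    if key not in DOMAIN_SKILLS:
        raise ValueError(f"no skill for challenge type: {challenge_type!r}")
    return BASE_SKILLS + [key]


if __name__ == "__main__":
    print(select_skills("web"))
    print(select_skills("pwn"))
```

Loading one domain skill instead of all eight is what keeps unrelated recon patterns out of the agent's context.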

## Skill Contract and Baseline Metrics

To keep skill quality consistent across agents, this repository includes:

- Canonical contract: `.agents/standards/skill-contract.yaml`

- Machine-readable contract: `.agents/standards/skill-contract.json`

- Quality gate config: `.agents/standards/quality-gate.json`

- Router single source of truth: `.agents/standards/router-spec.json`

- Reproducible baseline generator: `tools/skills/baseline_metrics.py`

- Validator: `tools/skills/validate_skills.py`

- Normalizer: `tools/skills/normalize_skills.py`

- Router generator: `tools/skills/generate_router_wrappers.py`

- Smoke eval gate: `tools/skills/smoke_eval.py`

- Current baseline snapshot: `reports/skill-baseline.json`

Common commands:

```
# Generate the baseline
python tools/skills/baseline_metrics.py --output reports/skill-baseline.json

# Normalize known Markdown issues
python tools/skills/normalize_skills.py --write --fix-footnotes

# Check for normalization drift (CI-safe)
python tools/skills/normalize_skills.py --check --fix-footnotes

# Validate against the contract
python tools/skills/validate_skills.py --report reports/skill-validation.json

# Regenerate all router wrappers from the single spec
python tools/skills/generate_router_wrappers.py

# Run the end-to-end quality gate
python tools/skills/smoke_eval.py --output reports/skill-smoke-eval.json
```

CI enforcement lives in `.github/workflows/skills-quality.yml`.

### Low-Cost Cursor Auto Benchmark (<=15 tasks)

Use the phase-routing benchmark to sanity-check real agent behavior at low cost:

```
python tools/benchmarks/run_cursor_phase_benchmark.py ^
  --tasks tools/benchmarks/cursor_phase_tasks.json ^
  --max-tasks 10 ^
  --timeout-sec 20 ^
  --output reports/benchmarks/cursor-auto-phase-benchmark.json
```

Task set file: `tools/benchmarks/cursor_phase_tasks.json` (10 tasks by default; hard max 15).
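The `^` at the end of each line is the line-continuation character for Windows `cmd.exe`; POSIX shells use `\` instead. One shell-independent way to launch the same run is to build the argument list in Python. This is a sketch only: `build_phase_benchmark_cmd` is a hypothetical helper, and it uses just the flags shown in the command above.

```python
import shlex
import sys


def build_phase_benchmark_cmd(max_tasks: int = 10, timeout_sec: int = 20) -> list[str]:
    """Build the argv list for the phase-routing benchmark command."""
    # The README states a hard maximum of 15 tasks.
    if not 1 <= max_tasks <= 15:
        raise ValueError("max_tasks must be between 1 and 15")
    return [
        sys.executable,
        "tools/benchmarks/run_cursor_phase_benchmark.py",
        "--tasks", "tools/benchmarks/cursor_phase_tasks.json",
        "--max-tasks", str(max_tasks),
        "--timeout-sec", str(timeout_sec),
        "--output", "reports/benchmarks/cursor-auto-phase-benchmark.json",
    ]


if __name__ == "__main__":
    cmd = build_phase_benchmark_cmd()
    # To execute, pass cmd to subprocess.run(cmd, check=True) from the repository root.
    print(shlex.join(cmd))
```

Passing a list to `subprocess.run` avoids shell quoting and continuation characters entirely, so the same script works on Windows, Linux, and macOS.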

### Security Benchmark (Skills-Only for Now, MCP Comparison Later)

Run the richer security benchmark with the `skills-only` profile first:

```
python tools/benchmarks/run_cursor_security_benchmark.py ^
  --profile skills-only ^
  --tasks tools/benchmarks/cursor_security_tasks.json ^
  --max-tasks 12 ^
  --timeout-sec 20 ^
  --output reports/benchmarks/cursor-security-skills-only.json
```

Later, after enabling the Kali and LatticeMind MCP servers, run:

```
python tools/benchmarks/run_cursor_security_benchmark.py ^
  --profile mcp-enabled ^
  --tasks tools/benchmarks/cursor_security_tasks.json ^
  --max-tasks 12 ^
  --timeout-sec 20 ^
  --output reports/benchmarks/cursor-security-mcp-enabled.json
```

Then compare:

```
python tools/benchmarks/compare_cursor_benchmarks.py ^
  --baseline reports/benchmarks/cursor-security-skills-only.json ^
  --candidate reports/benchmarks/cursor-security-mcp-enabled.json ^
  --output reports/benchmarks/cursor-security-comparison.json
```

### Vulnerability-Finding Benchmark (Real Quality)

Run the hard but low-cost vulnerability-finding benchmark (<=15 tasks), which uses strict JSON findings and TP/FP/FN scoring:

```
python tools/benchmarks/run_cursor_vuln_benchmark.py ^
  --profile control ^
  --tasks tools/benchmarks/cursor_vuln_tasks.json ^
  --max-tasks 12 ^
  --timeout-sec 25 ^
  --output reports/benchmarks/cursor-vuln-control.json

python tools/benchmarks/run_cursor_vuln_benchmark.py ^
  --profile skills-only ^
  --tasks tools/benchmarks/cursor_vuln_tasks.json ^
  --max-tasks 12 ^
  --timeout-sec 25 ^
  --output reports/benchmarks/cursor-vuln-skills-only.json
```

Later (after enabling MCPs):

```
python tools/benchmarks/run_cursor_vuln_benchmark.py ^
  --profile mcp-enabled ^
  --tasks tools/benchmarks/cursor_vuln_tasks.json ^
  --max-tasks 12 ^
  --timeout-sec 25 ^
  --output reports/benchmarks/cursor-vuln-mcp-enabled.json
```

Compare two vuln runs:

```
python tools/benchmarks/compare_cursor_vuln_benchmarks.py ^
  --baseline reports/benchmarks/cursor-vuln-control.json ^
  --candidate reports/benchmarks/cursor-vuln-skills-only.json ^
  --output reports/benchmarks/cursor-vuln-control-vs-skills.json
```
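TP/FP/FN counts are commonly summarized as precision, recall, and F1. The sketch below shows that standard arithmetic only; it is not the scoring code from `compare_cursor_vuln_benchmarks.py`, whose exact metrics and report format are not documented here. The `score` function and the example counts are made up for illustration.

```python
def score(tp: int, fp: int, fn: int) -> dict[str, float]:
    """Compute precision, recall, and F1 from true/false positive and false negative counts."""
    # Guard each division so an empty run yields 0.0 instead of raising.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    # Made-up counts: 6 correct findings, 2 spurious findings, 4 missed vulnerabilities.
    result = score(tp=6, fp=2, fn=4)
    print({name: round(value, 2) for name, value in result.items()})
```

Comparing two runs on precision and recall separately shows whether a profile finds more real vulnerabilities or simply reports more findings overall.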

## Design Philosophy

The Scaffolding is token-efficient by design. Skills are loaded selectively, notes externalize state rather than consuming context, and LatticeMind's confidence scoring prevents the agent from reasoning over irrelevant findings. It runs fully locally or on cloud infrastructure depending on your setup.
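Confidence-based filtering can be sketched as follows. The `Finding` fields, the 0.0 to 1.0 score range, the `0.7` threshold, and the `top_findings` helper are assumptions for illustration; LatticeMind's real output schema is not described in this README.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    title: str
    confidence: float  # assumed range: 0.0 (likely noise) to 1.0 (near certain)


def top_findings(
    findings: list[Finding], threshold: float = 0.7, limit: int = 5
) -> list[Finding]:
    """Drop findings below the threshold and return the strongest ones first."""
    kept = [f for f in findings if f.confidence >= threshold]
    return sorted(kept, key=lambda f: f.confidence, reverse=True)[:limit]


if __name__ == "__main__":
    # Made-up scan results.
    scan = [
        Finding("verbose server banner", 0.20),
        Finding("SQL injection in id parameter", 0.92),
        Finding("reflected XSS in search", 0.75),
    ]
    for finding in top_findings(scan):
        print(f"{finding.confidence:.2f}  {finding.title}")
```

Capping the list with `limit` bounds how many tokens the findings can consume, regardless of how noisy the scan was.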

## Responsible Use

This project is intended for security research and education. Only use it against systems you are authorized to test.

### Acknowledgements

- [k3nn3dy-ai](https://github.com/k3nn3dy-ai) for [Kali MCP](https://github.com/k3nn3dy-ai/kali-mcp), an essential part of this harness that provides direct access to Kali Linux security tooling through the Model Context Protocol.
