romovpa/claudini

GitHub: romovpa/claudini

A research framework that uses Claude Code to automatically search for and evaluate white-box adversarial attacks on LLMs.

Stars: 219 | Forks: 24

# ⛓️💥 Claudini ⛓️💥

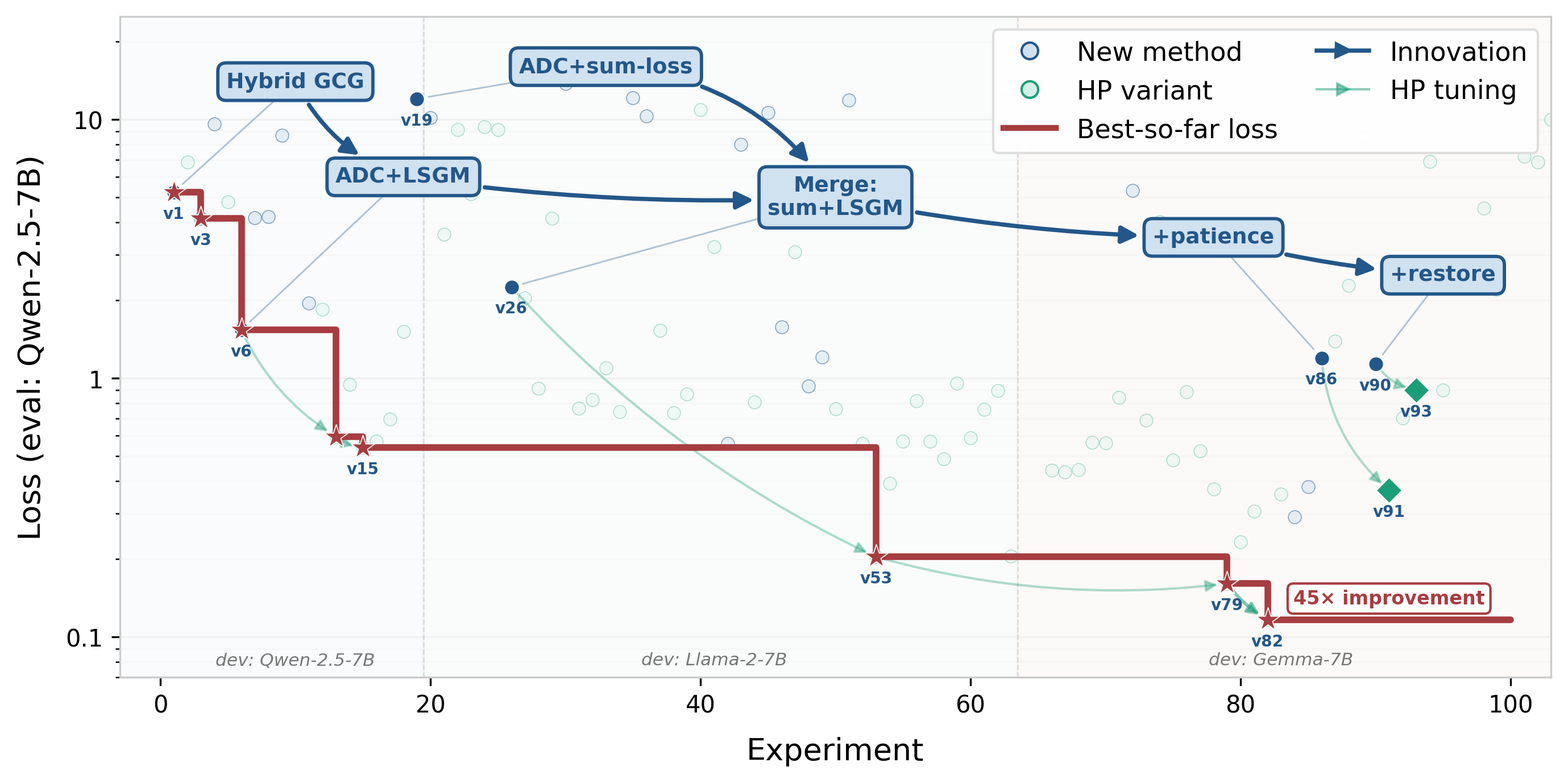

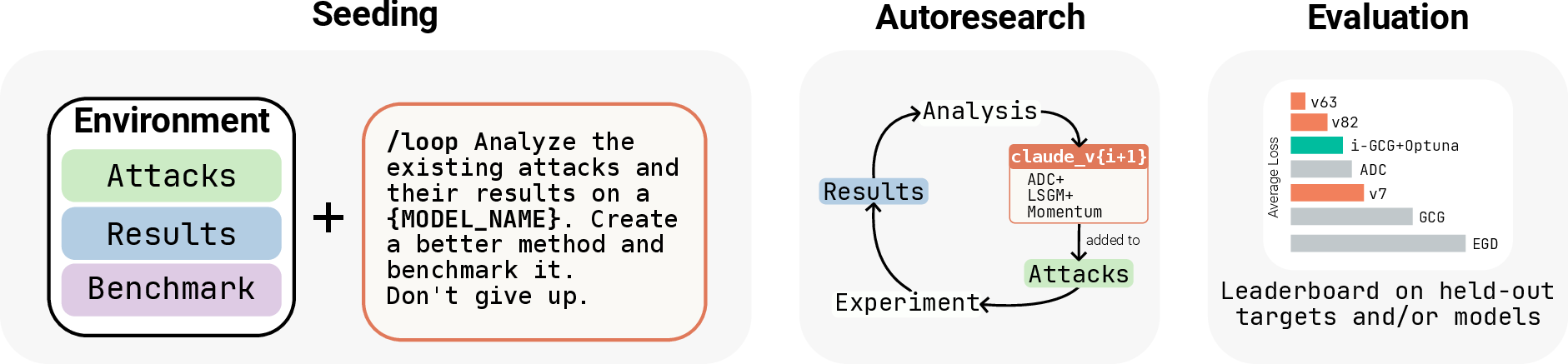

**Autoresearch Discovers State-of-the-Art Adversarial Attack Algorithms for LLMs**

[arXiv:2603.24511](https://arxiv.org/abs/2603.24511)

///sample__seed_.json`. Existing results are auto-skipped.

Precomputed results from the paper are available as a [GitHub release](https://github.com/romovpa/claudini/releases). Download and unzip `claudini-results.zip` into the repo root.

## Attack Methods

We consider white-box GCG-style attacks that search directly over the model's vocabulary using gradients. Each method ([`TokenOptimizer`](claudini/base.py#L429)) optimizes a short discrete token *suffix* that, when appended to an input prompt, causes the model to produce a desired target sequence.
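To make the idea concrete, here is a minimal sketch of one GCG-style search loop on a toy problem. It is not the repository's `TokenOptimizer` interface and it does not call a real LLM: the embedding table, the quadratic `loss_fn`, and the names `token_gradients` and `gcg_step` are all assumptions made for illustration. The structure is the part that carries over: take the gradient of the loss with respect to the one-hot token indicators, shortlist the top-k most promising replacement tokens per position, then evaluate a batch of single-token substitutions with the true loss and keep the best one.

```python
import random


def mixed_embedding(suffix, emb, weights, dim):
    """Weighted sum of the suffix-token embeddings (toy stand-in for a model forward pass)."""
    return [sum(w * emb[t][d] for w, t in zip(weights, suffix)) for d in range(dim)]


def loss_fn(suffix, emb, weights, target):
    """Toy loss: squared distance between the mixed embedding and a target vector."""
    mixed = mixed_embedding(suffix, emb, weights, len(target))
    return sum((m - g) ** 2 for m, g in zip(mixed, target))


def token_gradients(suffix, emb, weights, target):
    """Gradient of the toy loss w.r.t. the one-hot token indicator at each position.

    grads[i][v] is a first-order estimate of how the loss responds to
    putting more weight on token v at position i.
    """
    dim = len(target)
    mixed = mixed_embedding(suffix, emb, weights, dim)
    residual = [m - g for m, g in zip(mixed, target)]
    return [
        [2.0 * w * sum(emb[v][d] * residual[d] for d in range(dim)) for v in range(len(emb))]
        for w in weights
    ]


def gcg_step(suffix, emb, weights, target, rng, top_k=4, num_candidates=16):
    """One search step: gradient-guided shortlist, then exact evaluation of candidates."""
    grads = token_gradients(suffix, emb, weights, target)
    best, best_loss = list(suffix), loss_fn(suffix, emb, weights, target)
    for _ in range(num_candidates):
        pos = rng.randrange(len(suffix))
        # Shortlist the top_k tokens with the most negative gradient at this position.
        shortlist = sorted(range(len(emb)), key=lambda v: grads[pos][v])[:top_k]
        candidate = list(suffix)
        candidate[pos] = rng.choice(shortlist)
        candidate_loss = loss_fn(candidate, emb, weights, target)
        # Keep a substitution only if it lowers the true loss.
        if candidate_loss < best_loss:
            best, best_loss = candidate, candidate_loss
    return best, best_loss


# Hypothetical toy setup: random embeddings, uniform position weights, fixed target.
rng = random.Random(0)
vocab_size, dim, suffix_len = 20, 4, 5
emb = [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(vocab_size)]
weights = [1.0 / suffix_len] * suffix_len
target = [0.5, -0.25, 0.0, 0.75]

suffix = [rng.randrange(vocab_size) for _ in range(suffix_len)]
history = [loss_fn(suffix, emb, weights, target)]
for _ in range(20):
    suffix, step_loss = gcg_step(suffix, emb, weights, target, rng)
    history.append(step_loss)

print("final suffix:", suffix)
print("loss: %.4f -> %.4f" % (history[0], history[-1]))
```

Because the gradient only linearizes the loss around the current suffix, the shortlist is a heuristic. The exact re-evaluation of each candidate is what guarantees that the loss never increases from one step to the next in this sketch.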

- **Baselines** (existing methods): [`claudini/methods/original/`](claudini/methods/original/)

- **Claude-designed methods** (each run code produces a separate chain):
  - Generalizable attacks (random targets): [`claudini/methods/claude_random/`](claudini/methods/claude_random/)
  - Attacks on a safeguard model: [`claudini/methods/claude_safeguard/`](claudini/methods/claude_safeguard/)

See [`CLAUDE.md`](CLAUDE.md) for how to implement a new method.

**Leaderboard.** Run `uv run -m claudini.leaderboard results/` to generate per-track, per-model leaderboards ranking all methods by average loss. Results are saved to `results/loss_leaderboard//.json`.
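The ranking rule described above (per track, per model, methods ordered by average loss) can be sketched as follows. This is an illustration only, not the code behind `claudini.leaderboard`: the record fields `track`, `model`, `method`, and `loss`, the function name `build_leaderboards`, and the sample values are all hypothetical placeholders, and the real result files may be structured differently.

```python
from collections import defaultdict
from statistics import mean


def build_leaderboards(records):
    """Group losses by (track, model), then rank methods by mean loss, lowest first."""
    grouped = defaultdict(lambda: defaultdict(list))
    for record in records:
        key = (record["track"], record["model"])
        grouped[key][record["method"]].append(record["loss"])
    return {
        key: sorted(
            ((method, mean(losses)) for method, losses in methods.items()),
            key=lambda pair: pair[1],
        )
        for key, methods in grouped.items()
    }


# Made-up placeholder records, not results from the paper.
records = [
    {"track": "track_x", "model": "model_y", "method": "method_a", "loss": 2.0},
    {"track": "track_x", "model": "model_y", "method": "method_b", "loss": 1.0},
    {"track": "track_x", "model": "model_y", "method": "method_a", "loss": 4.0},
    {"track": "track_x", "model": "model_y", "method": "method_b", "loss": 2.0},
]

leaderboards = build_leaderboards(records)
for (track, model), ranking in leaderboards.items():
    print(track, model, ranking)
```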

## Citation

```
@article{panfilov2026claudini,
  title = {Claudini: Autoresearch Discovers State-of-the-Art Adversarial Attack Algorithms for LLMs},
  author = {Alexander Panfilov and Peter Romov and Igor Shilov and Yves-Alexandre de Montjoye and Jonas Geiping and Maksym Andriushchenko},
  journal = {arXiv preprint},
  eprint = {2603.24511},
  archivePrefix = {arXiv},
  year = {2026},
  url = {https://arxiv.org/abs/2603.24511},
}
```
