# Recursive Language Models (RLMs)

[Full Paper](https://arxiv.org/abs/2512.24601) • [Blogpost](https://alexzhang13.github.io/blog/2025/rlm/) • Documentation • RLM Minimal

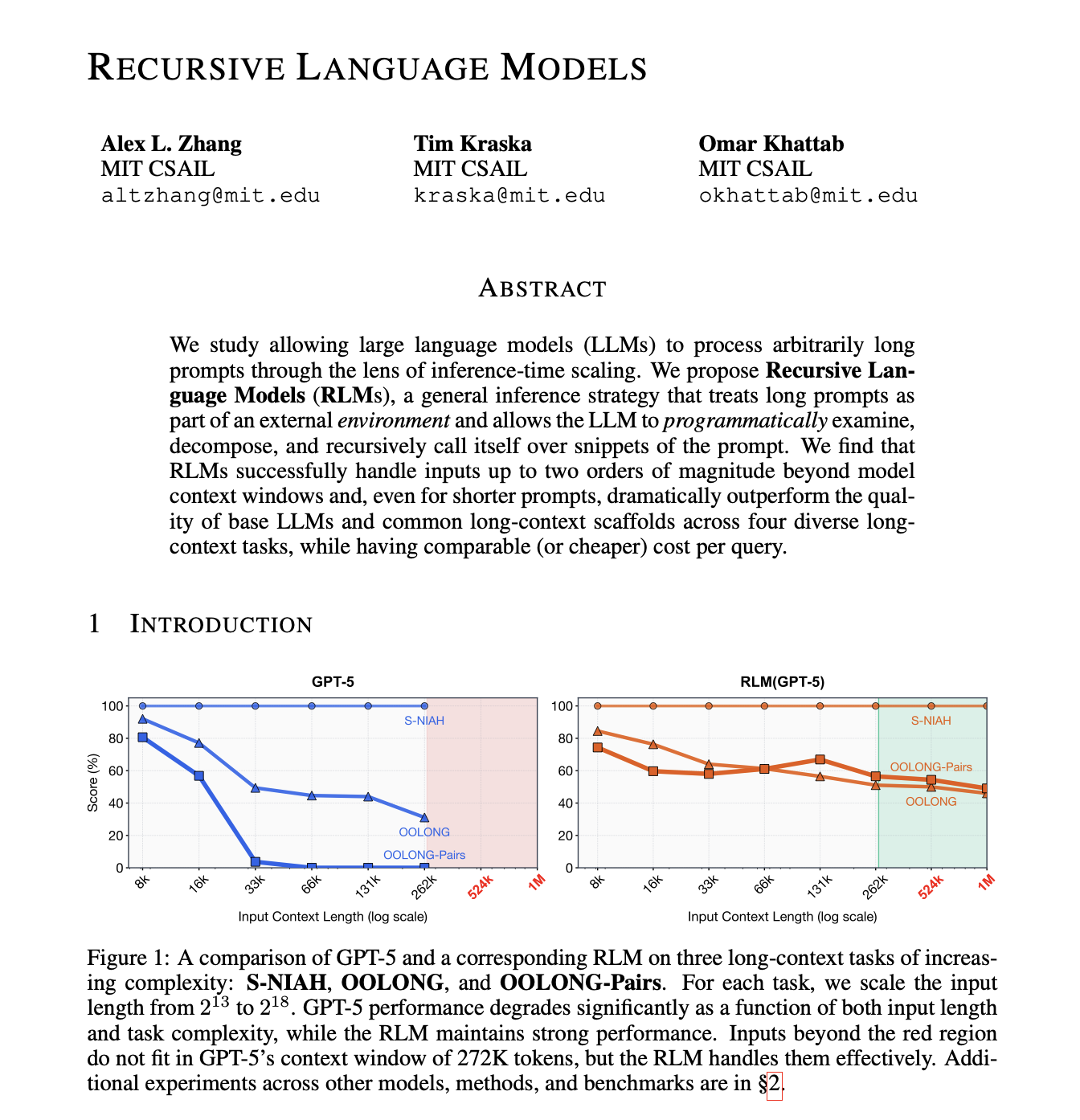

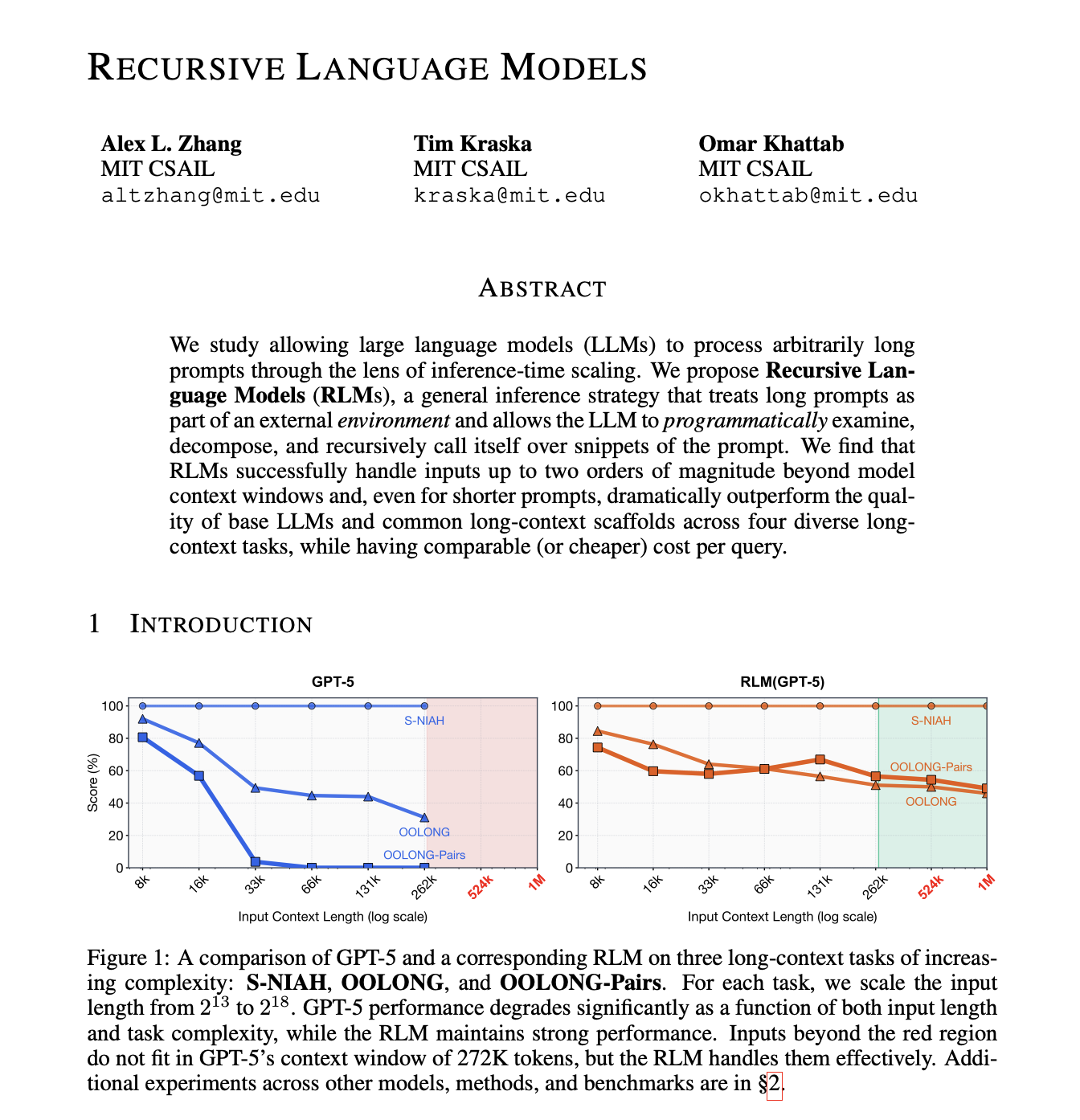

## Overview

Recursive Language Models (RLMs) are a task-agnostic inference paradigm that lets language models (LMs) handle near-infinite-length contexts by *programmatically* examining, decomposing, and recursively calling themselves over their input. RLMs replace the canonical `llm.completion(prompt, model)` call with an `rlm.completion(prompt, model)` call. The context is offloaded into a variable in a REPL environment, where the LM can interact with it and launch sub-LM calls.
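To make the idea concrete, here is a toy, single-round sketch of the pattern described above. It is not this repository's implementation: `toy_rlm_completion`, `llm_query`, and the `answer` variable are hypothetical names chosen for illustration, and both "LMs" are hard-coded stubs so the example runs offline.

```python
from typing import Callable


def toy_rlm_completion(
    prompt: str,
    root_lm: Callable[[str], str],
    sub_lm: Callable[[str], str],
) -> str:
    """Toy illustration: the long prompt lives in a REPL variable, not in the LM input."""
    # The REPL namespace holds the context and a helper for recursive sub-calls.
    namespace = {"context": prompt, "llm_query": sub_lm}
    # The root LM only sees a short description of the context, not its contents.
    instruction = (
        f"`context` is a str with {len(prompt)} characters. "
        "Write Python that sets `answer`."
    )
    code = root_lm(instruction)
    # Run the generated code; it may inspect `context` and call `llm_query`.
    exec(code, namespace)
    return str(namespace["answer"])


def stub_root_lm(instruction: str) -> str:
    # A real LM would write this code itself; here it is hard-coded.
    return (
        "chunks = [context[i:i + 20] for i in range(0, len(context), 20)]\n"
        "partials = [llm_query(chunk) for chunk in chunks]\n"
        "answer = sum(int(p) for p in partials)\n"
    )


def stub_sub_lm(chunk: str) -> str:
    # Stand-in for a sub-LM call: counts the letter "a" in its chunk.
    return str(chunk.count("a"))


long_context = "banana " * 10
print(toy_rlm_completion(long_context, stub_root_lm, stub_sub_lm))  # prints 30
```

The sketch runs only one round of code generation, which is a simplification made here; the point is that the root LM's input stays small regardless of how long `context` is.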

This repository provides an extensible inference engine for running RLMs on top of standard API-based and local LLMs. The initial experiments and idea were proposed in a [blogpost](https://alexzhang13.github.io/blog/2025/rlm/) in 2025, with expanded results in an [arXiv preprint](https://arxiv.org/abs/2512.24601).

## Quick Setup

You can try out RLMs quickly by installing from PyPI:

```bash
pip install rlms
```

The default RLM client uses a REPL environment that runs on the host process through Python `exec` calls. It uses the same virtual environment as the host process (i.e. it will have access to the same dependencies), but with some limitations in its available global modules. As an example, we can call RLM completions using GPT-5-nano:

```python
from rlm import RLM

rlm = RLM(
    backend="openai",
    backend_kwargs={"model_name": "gpt-5-nano"},
    verbose=True,  # For printing to console with rich, disabled by default.
)

print(rlm.completion("Print me the first 100 powers of two, each on a newline.").response)
```

### Manual Setup

Set up the dependencies with `uv` (or your virtual environment of choice):

```bash
curl -LsSf https://astral.sh/uv/install.sh | sh
uv init && uv venv --python 3.12  # change version as needed
uv pip install -e .
```

This project includes a `Makefile` to simplify common tasks.

- `make install`: Install base dependencies.

- `make check`: Run linter, formatter, and tests.

To run a quick test, the following will run an RLM query with the OpenAI client using your environment variable `OPENAI_API_KEY` (feel free to change this). This will generate console output as well as a log which you can use with the visualizer to explore the trajectories.

```bash
make quickstart
```

## REPL Environments

We support two types of REPL environments: isolated and non-isolated. Non-isolated environments (the default) execute code on the same machine as the RLM (e.g. through `exec`). This is reasonable for local, low-risk tasks such as simple benchmarking, but can be dangerous if malicious users can influence the prompts or tool calls. Fully isolated environments use cloud-based sandboxes (e.g. Prime Sandboxes, [Modal Sandboxes](https://modal.com/docs/guide/sandboxes)) to run code generated by the RLM, isolating it from the host process. Custom environments can be added, but we natively support the following: `local` (default), `docker`, `modal`, `prime`, `daytona`, `e2b`.

```python
rlm = RLM(
    environment="...",  # "local", "docker", "modal", "prime", "daytona", "e2b"
    environment_kwargs={...},
)
```

### Local Environments

The default `local` environment, `LocalREPL`, runs in the same process as the RLM itself, with explicitly specified global and local namespaces that provide only minimal security. This REPL is generally safe for local experimentation, but it should not be used in production settings. It also shares the same virtual environment (e.g. Conda or uv) as the host process.
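As a rough illustration of what running code through `exec` with explicit namespaces looks like, the sketch below passes a small allow-list of builtins as globals and the context as a local. The `SAFE_BUILTINS` allow-list and the `run_in_namespace` helper are assumptions made for this example; they are not taken from `LocalREPL`, whose actual restrictions may differ.

```python
import contextlib
import io

# Allow-list of builtins exposed to executed code (an illustrative choice).
SAFE_BUILTINS = {"len": len, "print": print, "range": range, "sum": sum, "str": str}


def run_in_namespace(code: str, context: str) -> tuple[str, dict]:
    """Execute `code` with explicit globals/locals and capture what it prints."""
    global_ns = {"__builtins__": SAFE_BUILTINS}
    local_ns = {"context": context}
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(code, global_ns, local_ns)
    return buffer.getvalue(), local_ns


output, variables = run_in_namespace("n = len(context)\nprint(n)", "hello")
print(output.strip(), variables["n"])

# Without `__import__` in the builtins, import statements fail.
try:
    run_in_namespace("import os", "")
except ImportError as err:
    print("blocked:", type(err).__name__)
```

Restricting namespaces this way limits accidental misuse but is not a security boundary, which is why isolated environments are the better fit for untrusted input.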

#### Docker

(*requires [Docker installed](https://docs.docker.com/desktop/setup/install/)*)

We also support a Docker-based environment called `DockerREPL` that launches the REPL environment in a Docker container. By default, we use the `python:3.11-slim` image, but you can specify a custom image as well.

### Isolated Environments

We support several REPL environments that run on separate, cloud-based machines. Whenever code running in one of these environments makes a recursive sub-call, the sandbox requests it from the host process.
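The request flow can be pictured with a small in-process sketch. Here a `queue.Queue` stands in for whatever transport connects a sandbox to the host, and `serve_sub_calls`, `make_remote_llm_query`, and `fake_lm` are hypothetical names invented for this example rather than functions from this repository.

```python
import queue
import threading
from typing import Callable


def serve_sub_calls(requests: queue.Queue, lm: Callable[[str], str]) -> None:
    """Host side: answer sub-call requests until a None sentinel arrives."""
    while True:
        item = requests.get()
        if item is None:
            break
        prompt, reply_box = item
        reply_box.put(lm(prompt))


def make_remote_llm_query(requests: queue.Queue) -> Callable[[str], str]:
    """Sandbox side: build a function that forwards each prompt to the host."""

    def llm_query(prompt: str) -> str:
        reply_box: queue.Queue = queue.Queue(maxsize=1)
        requests.put((prompt, reply_box))
        # Block until the host has produced the sub-LM response.
        return reply_box.get(timeout=5)

    return llm_query


def fake_lm(prompt: str) -> str:
    # Stand-in for a real model call made by the host.
    return prompt.upper()


requests: queue.Queue = queue.Queue()
host = threading.Thread(target=serve_sub_calls, args=(requests, fake_lm))
host.start()

llm_query = make_remote_llm_query(requests)
result = llm_query("summarize chunk 3")

requests.put(None)  # tell the host loop to stop
host.join()
print(result)  # prints SUMMARIZE CHUNK 3
```

One consequence of this arrangement, at least in the sketch, is that model credentials stay with the host and the sandbox only ever sees prompts and responses.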

#### Modal Sandboxes

To use [Modal Sandboxes](https://modal.com/docs/guide/sandboxes) as the REPL environment, you need to install the Modal library and authenticate your Modal account.

```bash
uv add modal  # add modal library
modal setup   # authenticate account
```

#### Prime Intellect Sandboxes

To use [Prime Sandboxes](https://docs.primeintellect.ai/sandboxes/sdk), install the SDK and set your API key:

```bash
uv pip install -e ".[prime]"
export PRIME_API_KEY=...
```

### Model Providers

We currently support most major clients (OpenAI, Anthropic), as well as the router platforms (OpenRouter, Portkey). For local models, we recommend using vLLM (which interfaces with the [OpenAI client](https://github.com/alexzhang13/rlm/blob/main/rlm/clients/openai.py)). To view or add support for more clients, start by looking at [`rlm/clients/`](https://github.com/alexzhang13/rlm/tree/main/rlm/clients).

## Relevant Reading

* **[Dec '25]** [Recursive Language Models arXiv](https://arxiv.org/abs/2512.24601)

* **[Oct '25]** [Recursive Language Models Blogpost](https://alexzhang13.github.io/blog/2025/rlm/)

If you use this code or repository in your research, please cite:

```bibtex
@misc{zhang2026recursivelanguagemodels,
      title={Recursive Language Models},
      author={Alex L. Zhang and Tim Kraska and Omar Khattab},
      year={2026},
      eprint={2512.24601},
      archivePrefix={arXiv},
      primaryClass={cs.AI},
      url={https://arxiv.org/abs/2512.24601},
}
```

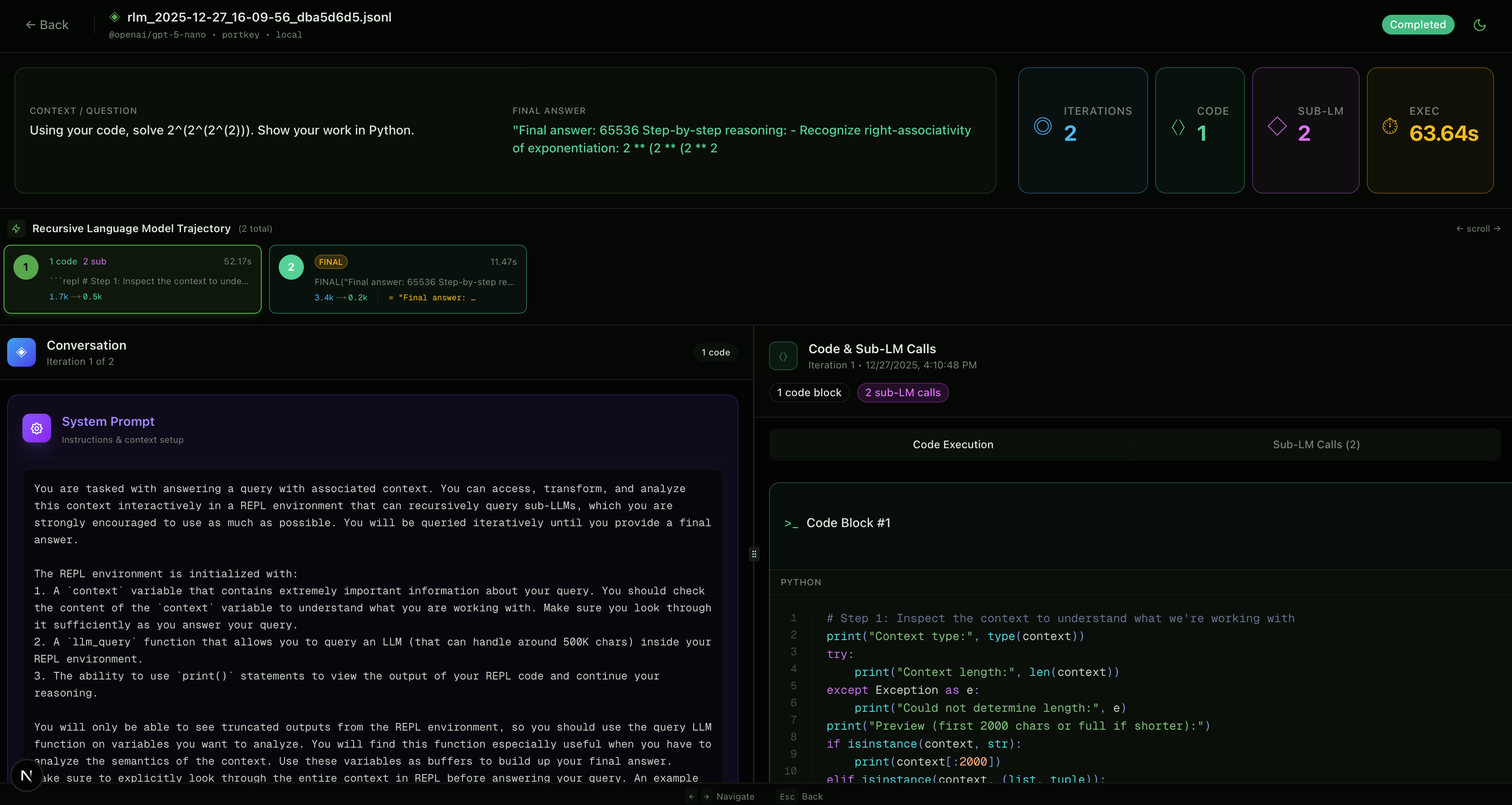

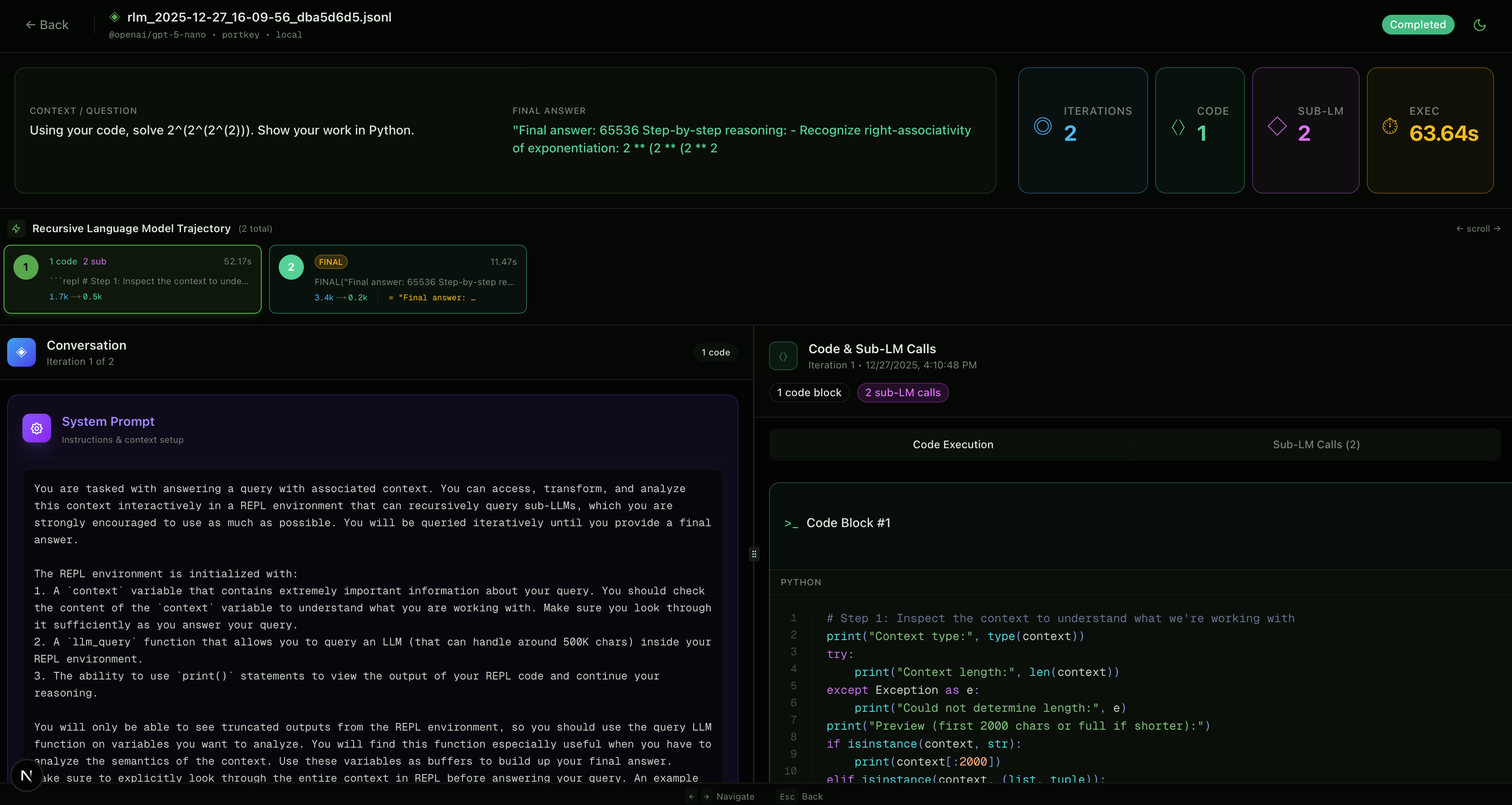

## Optional: Trajectory metadata and logging

`RLMChatCompletion` has an optional `metadata` field (default `None`) that holds the full trajectory (run config + all iterations and sub-calls) so you can reconstruct the run. Pass an `RLMLogger` to capture it:

- **In-memory only** (trajectory on `completion.metadata`): `logger=RLMLogger()` (no `log_dir`).

- **Also save to disk** (JSONL for the visualizer): `logger=RLMLogger(log_dir="./logs")`.
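If you want to inspect saved logs without the visualizer, a minimal sketch along these lines can load them. It assumes only that each log is a `.jsonl` file with one JSON object per line; the record schema is not specified here, so the helpers (`load_trajectory` and `summarize_logs`, both hypothetical names) simply parse and count records.

```python
import json
from pathlib import Path


def load_trajectory(path: Path) -> list[dict]:
    """Parse one JSONL log file into a list of records, skipping blank lines."""
    records = []
    with path.open(encoding="utf-8") as handle:
        for line in handle:
            if line.strip():
                records.append(json.loads(line))
    return records


def summarize_logs(log_dir: str) -> dict[str, int]:
    """Map each .jsonl file name in `log_dir` to its number of records."""
    return {
        path.name: len(load_trajectory(path))
        for path in sorted(Path(log_dir).glob("*.jsonl"))
    }
```

For example, `summarize_logs("./logs")` returns a dictionary keyed by file name, which is a quick way to check that a run actually wrote its trajectory.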

## Optional Debugging: Visualizing RLM Trajectories

We provide a simple visualizer to inspect code, sub-LM, and root-LM calls. Use `RLMLogger(log_dir="./logs")` so each completion writes a `.jsonl` file:

```python
from rlm.logger import RLMLogger
from rlm import RLM

logger = RLMLogger(log_dir="./logs")
rlm = RLM(..., logger=logger)
```

To run the visualizer locally, we use Node.js and shadcn/ui:

```bash
cd visualizer/
npm run dev  # default localhost:3001
```

You'll have the option to select saved `.jsonl` files.